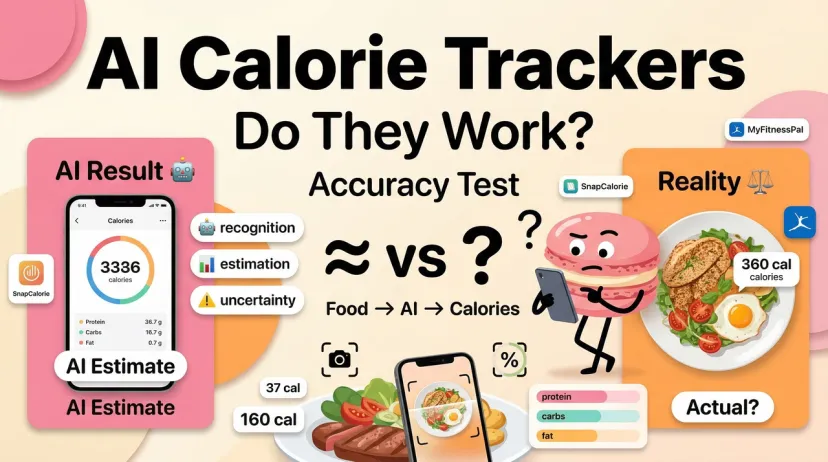

Do AI Calorie Trackers Actually Work?

I'll be honest with you: I went into this with low expectations.

I'd tried manual calorie tracking twice. Both times I quit within three weeks — not because I didn't care, but because logging "homemade stir fry with whatever was left in the fridge" into a database is an exercise in guessing and then feeling vaguely bad about the guess. When people started talking about AI calorie trackers that could just read a photo, I was interested and skeptical in equal measure.

So I actually tested them. And the answer to "do they work" is more nuanced than the marketing implies — in both directions.

What AI Calorie Trackers Claim to Do

The promise: faster, more accurate food logging

The core pitch is this: instead of searching a database, finding the closest entry, estimating how much you ate, and hoping the entry was accurate in the first place, you take a photo and the AI does the rest.

That's the appeal. Manual calorie logging is slow, tedious, and honestly not that accurate anyway. Research published in the New England Journal of Medicine found that manual self-reporting typically underestimates calorie intake by 30% or more. So the bar isn't "is AI perfect" — it's "is AI better than what most people are actually doing."

How they work (photo recognition, text input, databases)

Most AI calorie trackers combine two things: an image recognition model that identifies what's in the photo, and a nutritional database that pulls the values for those identified foods.

The image recognition handles the "what is it" question. The database handles the "how many calories" question. Portion estimation — "how much of it" — is the third piece, and it's the hardest. Some apps use depth sensors or volumetric analysis to estimate portions more precisely. Most use a combination of visual inference and asking you to confirm or adjust.
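Those three questions (what is it, how much of it, how many calories) can be sketched as a tiny pipeline. This is a simplified illustration, not how any particular app is built: the `Detection` class stands in for whatever a recognition model and portion estimator would actually return, and the calorie densities in `KCAL_PER_100G` are rough illustrative values rather than entries from a real app's database.

```python
from dataclasses import dataclass

# Illustrative calorie densities (kcal per 100 g). Rough values for this
# sketch only, not taken from any app's database.
KCAL_PER_100G = {
    "cooked white rice": 130,
    "grilled chicken breast": 165,
    "banana": 89,
}

@dataclass
class Detection:
    """Hypothetical result of the recognition and portion steps for one food."""
    label: str    # "what is it" (image recognition)
    grams: float  # "how much of it" (portion estimate)

def estimate_calories(detections):
    """Answer "how many calories" with a database lookup per detected food."""
    total = 0.0
    for d in detections:
        kcal_per_gram = KCAL_PER_100G[d.label] / 100
        total += d.grams * kcal_per_gram
    return round(total)

plate = [
    Detection("cooked white rice", 150),
    Detection("grilled chicken breast", 120),
]
print(estimate_calories(plate))  # 393
```

Notice that the lookup itself is trivial. Any mistake in `label` or `grams` passes straight through to the final number, which is why recognition and portion estimation carry most of the risk.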

Text and barcode input exist as fallbacks for when photo recognition isn't the right tool — packaged food with a barcode, or a complex recipe where you know exactly what went in.

Where AI Calorie Trackers Actually Deliver

Common foods with large database coverage

For standard, recognizable foods — a chicken breast, a bowl of oatmeal, a banana, a packaged snack — AI calorie trackers are genuinely good. A 2025 University of Sydney study evaluating 18 AI-powered food apps found top performers achieving 92–97% accuracy for identifying foods from photos. That's for clear, single-item or simple-plate photos under reasonable conditions.

Packaged food with a barcode is the most reliable scenario of all: the calorie count is on the label, the app looks it up from the barcode, and accuracy is close to 100%. This is where apps like Cronometer (nutritionist-verified database) and MyFitnessPal (14M+ entries) are genuinely strong even before any AI recognition layer.

Speed advantage vs manual entry

This part is real. Photo logging takes about 10 seconds. Manual database search, entry, and portion estimation takes 3–5 minutes for a simple meal and longer for anything complex. Apps with AI recognition use voice inputs, natural language commands, and image identification to log calories automatically — and the consistency benefit compounds over time. You're more likely to log a meal if it takes 10 seconds than if it takes 5 minutes.

A 12-month study of users found that AI-assisted tracking led to 23% better adherence to nutritional goals compared to manual methods. The mechanism isn't that AI is more accurate — it's that lower friction means more consistent logging, and consistent logging produces better data than sporadic accurate logging.

Pattern recognition and trend tracking over time

Where AI trackers genuinely add value beyond a simple food database is in the pattern layer. Most apps now surface weekly summaries: your protein was consistently low on weekdays, you tend to go over on Saturdays, your fiber intake looks fine. This kind of observation requires logging consistently for long enough to have data — which is exactly what the speed advantage enables.
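A weekly summary like "protein was low on weekdays" is, underneath, just grouping and averaging. Here is a minimal sketch of that idea. The log values and the `PROTEIN_TARGET_G` target are made up for illustration, and real apps presumably do something more elaborate.

```python
from collections import defaultdict
from datetime import date

# Hypothetical daily protein totals (grams) for one week, Monday to Sunday.
daily_protein = {
    date(2025, 3, 3): 60,
    date(2025, 3, 4): 65,
    date(2025, 3, 5): 55,
    date(2025, 3, 6): 70,
    date(2025, 3, 7): 50,
    date(2025, 3, 8): 95,
    date(2025, 3, 9): 85,
}
PROTEIN_TARGET_G = 90  # assumed personal target, not a recommendation

def average_by_day_type(log):
    """Average the logged values separately for weekdays and weekends."""
    buckets = defaultdict(list)
    for day, grams in log.items():
        kind = "weekend" if day.weekday() >= 5 else "weekday"
        buckets[kind].append(grams)
    return {kind: sum(values) / len(values) for kind, values in buckets.items()}

averages = average_by_day_type(daily_protein)
for kind, avg in averages.items():
    status = "below target" if avg < PROTEIN_TARGET_G else "on target"
    print(f"{kind}: {avg:.0f} g average, {status}")
```

With only two or three logged days in the week, the averages would say very little, which is the practical reason consistent logging matters for this feature.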

Where They Still Fall Short

Mixed dishes and home cooking accuracy

This is the failure mode I hit immediately. AI apps struggle significantly with homemade meals, averaging only 50% accuracy in testing. A pasta dish with a homemade sauce, a stew that's been cooking all day, a stir fry with seven different vegetables and varying amounts of oil — the AI is guessing more than analyzing.

The problem is layered. First, the AI has to correctly identify every component in a mixed dish. Then it has to estimate how much of each component is present. Then it has to account for cooking method — the same chicken breast has meaningfully different calorie counts depending on whether it's grilled, pan-fried in oil, or braised in liquid. Miss any of those steps and the output is wrong, sometimes significantly.

This isn't a problem that better AI alone can solve. It's a fundamental constraint of visual estimation. As NYU Tandon researchers noted, "the same dish can look dramatically different based on who prepared it": a burger from one restaurant bears little resemblance to a homemade version.

Portion size estimation errors

Portion size is where the most meaningful calorie errors happen. The visual difference between 100g and 150g of rice on a plate is not obvious to the human eye, and it's not obvious to an AI model either. A difference of 50g in cooked rice is roughly 65 calories. That's not catastrophic on its own, but systematic underestimation across three meals a day adds up.
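To see how that adds up, here is the rice example as a few lines of arithmetic. The density of 1.3 kcal per gram is simply what the "50g is roughly 65 calories" figure implies, and the assumption that the same miss happens at every meal is a deliberate worst case for illustration.

```python
# Calorie density implied by "50 g of cooked rice is roughly 65 calories".
KCAL_PER_GRAM_COOKED_RICE = 1.3

def portion_error_kcal(actual_g, estimated_g,
                       kcal_per_gram=KCAL_PER_GRAM_COOKED_RICE):
    """Calories missed when a portion is estimated smaller than it really is."""
    return (actual_g - estimated_g) * kcal_per_gram

per_meal = portion_error_kcal(actual_g=150, estimated_g=100)
per_day = per_meal * 3   # assumes the same miss at three meals a day
per_week = per_day * 7

print(round(per_meal), round(per_day), round(per_week))  # 65 195 1365
```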

Apps that use depth sensors (SnapCalorie uses LIDAR) or ask you to specify plate size before analyzing can narrow this gap. Apps that rely purely on a flat photo are making informed guesses on every serving size.

Photo recognition limits with certain foods

AI recognition struggles with a few specific categories, no matter how good the app or the photo is:

Visually similar foods with very different calorie counts. Grilled salmon and grilled chicken look similar on a plate. Cauliflower rice and white rice can look identical once cooked. The AI first lands on a broad category, such as "white grain on a plate," and whichever specific food it then picks determines the calorie output.

Non-Western cuisines. The University of Sydney study found that AI apps struggle significantly with mixed dishes from non-Western cuisines, particularly Asian foods. Most recognition models were trained primarily on Western food imagery, which means they're less reliable for dishes that don't have a close visual match in the training data.

Foods with high liquid or sauce content. A bowl of soup or a sauced dish hides its components. The AI can see the surface; it can't see what's underneath or how much liquid is in the bowl.

AI vs Manual Calorie Tracking: Honest Comparison

Accuracy

Neither is perfectly accurate, and the comparison is more nuanced than "AI is better."

For packaged foods and simple meals: AI matches or beats manual entry, because manual entry is only as good as the database entry you find and select, and user-submitted databases have significant error rates.

For homemade and mixed dishes: manual entry by someone who knows exactly what went into the recipe (and weighs ingredients) is more accurate than AI photo estimation. The problem is most people don't do that — they estimate, pick the closest database entry, and move on.

Realistically: SnapCalorie's 16% mean error rate has been verified by published data. That means on a 500-calorie meal, the estimate is off by about 80 calories on average. A 20% error in calorie counting can mean the difference between weight loss and maintenance: on a 1,500-calorie diet, a 20% error equals 300 calories a day, or about 2,100 a week, enough to erase a half-pound weekly loss.
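The arithmetic behind those figures is short enough to check yourself. One assumption is added here that the numbers above don't state outright: the common rule of thumb that a pound of body weight corresponds to roughly 3,500 calories, stored as `KCAL_PER_POUND`.

```python
KCAL_PER_POUND = 3500  # common rule of thumb, an assumption in this sketch

def error_kcal(total_kcal, error_rate):
    """Calories by which an estimate is off at a given relative error."""
    return total_kcal * error_rate

meal_error = error_kcal(500, 0.16)    # 16% error on a 500-calorie meal
daily_error = error_kcal(1500, 0.20)  # 20% error on a 1,500-calorie day
weekly_error = daily_error * 7
weekly_pounds = weekly_error / KCAL_PER_POUND

print(round(meal_error), round(daily_error), round(weekly_error))  # 80 300 2100
print(round(weekly_pounds, 1))  # 0.6
```

A 20% daily error works out to a bit more than half a pound a week, which is why it can cancel out a modest deficit entirely.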

Time investment

Manual entry: 3–5 minutes per meal minimum, longer for complex dishes. AI photo logging: 10–30 seconds.

This gap has real behavioral consequences. The tracker you actually use consistently beats the more accurate tracker you use sporadically.

Who benefits more from each approach

AI tracking is better for: people who've quit manual tracking before due to friction; anyone who eats a lot of packaged foods or restaurant meals; and people whose primary goal is general awareness rather than precise macro hitting.

Manual entry is better for: anyone managing strict calorie targets where accuracy matters; people who batch cook and can weigh ingredients; and users following clinical dietary protocols where ±15% errors have meaningful consequences.

Which AI Calorie Tracker Is Worth Trying

Best overall: Cronometer

Not the flashiest AI, but the most reliable data. Cronometer uses a nutritionist-verified food database — not crowdsourced entries — which means when you manually log a food, the numbers are accurate. Free tier includes unlimited logging with no daily cap, full macro and micronutrient breakdown, and a barcode scanner. The AI photo recognition is available on the paid Gold tier ($8.99/month). For most people, the free manual logging with a verified database produces better outcomes than AI photo estimation from an unverified one.

Best free option for photo logging: Welling

Welling's AI-based chat and photo recognition logs meals conversationally: describe what you ate or photograph it, and it estimates the breakdown. The free version offers limited features before you hit the paywall, but the 7-day trial on paid tiers is enough to test whether the format works for you. It has strong coverage for international and mixed dishes, which is a real gap in most competing apps.

Best for photo logging accuracy: SnapCalorie

SnapCalorie's 16% mean error rate is the most independently verified accuracy figure in the category. It uses LIDAR depth sensing and volumetric measurement rather than flat photo analysis for portion estimation, which narrows the error range on portion size. Free tier includes 3 AI photo scans per day — enough to test the tool across your actual meals before deciding whether to upgrade.

Verdict

AI calorie trackers work — but not in the way the marketing usually implies.

They work because consistency beats precision, and lower friction means more consistent logging. If you've tried manual tracking and quit, the speed advantage of photo logging is a real behavioral improvement.

They don't work as precision instruments. Homemade meals, mixed dishes, and portion size estimation all carry meaningful error margins. If you're trying to hit exact macro targets for a specific performance goal, AI photo recognition alone isn't reliable enough. You'd want to combine it with manual verification on key meals or use a weighed-ingredient approach for recipes you cook repeatedly.

The honest version: for most people eating mostly standard meals, AI tracking is accurate enough to produce useful trend data and good enough to beat the alternative of not tracking at all. For anyone needing clinical-level precision, it's a starting point — not a substitute for working with a registered dietitian on a verified plan.

Tracking tells you what you ate. The harder question is what to cook next — in a way that actually fits what you've been eating, what you have in the kitchen, and what you're in the mood for. At Macaron, users build tools like a personal recipe generator that takes your ingredients and turns them into something worth cooking — with a history that remembers every combination you've tried. Try it free.

FAQ

How accurate is AI calorie tracking really?

It depends on the food. For simple, identifiable foods and packaged items, top apps achieve 92–97% accuracy per the University of Sydney research. For homemade meals and mixed dishes, independent testing puts accuracy closer to 50%. The average across all meal types sits somewhere between those extremes. A verified error figure from published data: SnapCalorie's mean error rate is 16%, meaning an 84% average accuracy across the meals in its testing set.

Can I trust AI calorie counts for weight loss?

With calibrated expectations, yes. The most important thing AI tracking does isn't produce perfect numbers — it produces consistent numbers. Consistent tracking, even with some error margin, generates trend data you can act on. If your weight isn't moving in the direction you want despite consistent tracking, you can adjust based on the pattern. If you need clinical-level precision for a medical nutrition goal, pair AI tracking with professional guidance rather than relying on it as a standalone tool.
