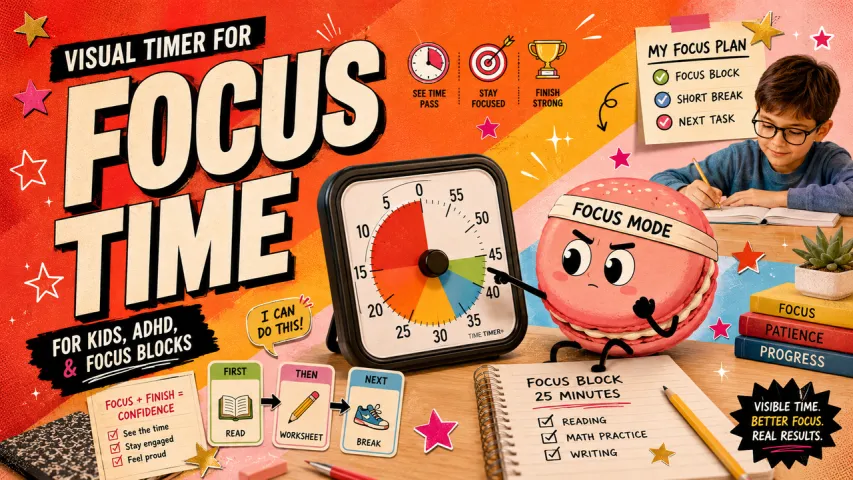

How to Build Your First OpenClaw Skill: Template, Testing, and Publishing Guide

Hi, I'm Anna. I spent three afternoons last week trying to build my first OpenClaw skill. Not because it's complicated — the actual structure is surprisingly simple — but because I kept hitting small frictions that weren't in the docs. Things like: which metadata field actually works (spoiler: not the one you'd guess), how to test without restarting the entire agent every time, and why my skill kept showing up as "instruction-only" even though I had scripts.

This isn't a heroic origin story. It's what actually happened when I sat down to solve a small problem: I wanted OpenClaw to check my calendar each morning and drop a summary in a specific format I could paste into my notes.

Pick the right first use case

Start with something you'd actually use. Not "something impressive to show off" or "a good portfolio piece." Pick the smallest friction that genuinely annoys you.

For me, it was the calendar thing. For someone I talked to on the OpenClaw Discord, it was auto-formatting meeting notes. For another person, it was checking server status without SSHing in manually.

The sweet spot is: simple enough to finish in a day, useful enough that you'll notice if it breaks. If your first skill takes two weeks to build, you won't finish it. If it's not something you'll use daily, you won't maintain it.

Scaffold your skill

Using the official skill template

Here's what tripped me up: there isn't one official template file you download. The structure is scattered across the official skills documentation and the creating skills guide, and you piece it together yourself.

Every skill is just a folder with a SKILL.md file inside. That's it. Everything else — scripts, configs, reference docs — is optional.

```text
my-skill/
├── SKILL.md        # Required
├── scripts/        # Optional
│   └── helper.sh
└── references/     # Optional
    └── notes.md
```

The folder name matters more than you'd think. Use lowercase with hyphens: calendar-summary, not CalendarSummary or calendar_summary. OpenClaw uses this name as the skill's identifier.
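If you script your setup, you can check a folder name before OpenClaw ever sees it. Here is a minimal sketch. `isValidSkillName` is a hypothetical helper, not an OpenClaw API. Allowing digits and rejecting leading, trailing, or doubled hyphens are my assumptions on top of the lowercase-with-hyphens rule.

```javascript
// Hypothetical helper, not part of OpenClaw: checks a folder name against the
// lowercase-with-hyphens convention. Digits are allowed and leading, trailing,
// or doubled hyphens are rejected; both choices are assumptions.
function isValidSkillName(name) {
  return /^[a-z0-9]+(-[a-z0-9]+)*$/.test(name);
}

for (const candidate of ["calendar-summary", "CalendarSummary", "calendar_summary"]) {
  console.log(`${candidate}: ${isValidSkillName(candidate) ? "ok" : "rename it"}`);
}
```

Only the first name passes. The other two are the forms to avoid.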

Project structure explained

I created mine in ~/.openclaw/skills/calendar-summary/. Workspace skills take precedence over bundled ones, which means you can test locally without breaking anything else.

The SKILL.md file has two parts: YAML frontmatter at the top, then markdown instructions below.

```markdown
---
name: calendar-summary
description: Generate a formatted summary of today's calendar events
metadata:
  openclaw:
    emoji: "📅"
    requires:
      env: ["CALENDAR_API_KEY"]
      bins: ["jq"]
---

# Calendar Summary

## What it does

Fetches today's calendar events and formats them as a plain text summary.

## Inputs needed

- Date (defaults to today)
- Calendar source (defaults to primary)

## Workflow

1. Fetch events from calendar API
2. Filter for today
3. Format as bullet list with times
4. Output to terminal or file

## Guardrails

- Do not expose API keys in output
- Handle empty calendars gracefully
```
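Frontmatter mistakes are easy to make and hard to spot, so a rough pre-flight check can help. This is a sketch, not a YAML parser. The hypothetical `checkSkillMd` only confirms the `---` fences, looks for unindented `name` and `description` keys, and compares `name` with the folder name. Keeping those two identical is my assumption about good hygiene. I haven't confirmed that OpenClaw enforces it.

```javascript
// Rough pre-flight check for a SKILL.md file (hypothetical helper, not a
// YAML parser). Returns a list of problems; an empty list means it passed.
function checkSkillMd(text, folderName) {
  const lines = text.split(/\r?\n/);
  if (lines[0] !== "---") return ["first line must be ---"];

  const end = lines.indexOf("---", 1);
  if (end === -1) return ["frontmatter is never closed with ---"];

  // Only unindented "key: value" lines count as top-level keys.
  // Surrounding quotes on the value are stripped.
  const topLevel = {};
  for (const line of lines.slice(1, end)) {
    const match = /^([A-Za-z_][\w-]*):\s*(.*)$/.exec(line);
    if (match) topLevel[match[1]] = match[2].trim().replace(/^["']|["']$/g, "");
  }

  const problems = [];
  for (const key of ["name", "description"]) {
    if (!topLevel[key]) problems.push(`missing top-level "${key}"`);
  }
  if (topLevel.name && topLevel.name !== folderName) {
    problems.push(`name "${topLevel.name}" does not match folder "${folderName}"`);
  }
  return problems;
}

const sample = [
  "---",
  "name: calendar-summary",
  "description: Generate a formatted summary of today's calendar events",
  "metadata:",
  "  openclaw:",
  '    emoji: "📅"',
  "---",
  "# Calendar Summary",
].join("\n");
console.log(checkSkillMd(sample, "calendar-summary")); // []
```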

Minimal "hello action" example

Before I built the real thing, I tested with the simplest possible skill:

```markdown
---
name: hello-test
description: Confirm the skill is loading correctly
---

# Hello Test

When the user says "test skill," respond with "Hello from hello-test skill" and the current timestamp.
```

That's it. No scripts, no dependencies. Just a skill that proves OpenClaw is reading the file.

I restarted OpenClaw, typed "test skill," and it worked. Small win, but it confirmed the scaffolding was right before I added complexity.

Dependencies and package.json

Most skills don't need a package.json. If your skill is pure instructions, skip it entirely.

I only added one because I wanted to use a Node script to format the calendar data. In that case:

```json
{
  "name": "calendar-summary",
  "version": "1.0.0",
  "type": "module",
  "dependencies": {
    "date-fns": "^3.0.0"
  }
}
```

OpenClaw doesn't auto-install dependencies. You run npm install yourself in the skill folder before enabling it.
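Because nothing installs dependencies for you, a small check can tell you whether `npm install` has run. This sketch rests on several assumptions. `missingDependencies` is hypothetical. It only looks at `dependencies`, not `devDependencies`. It treats a dependency as installed when `node_modules/<name>/package.json` exists. The file-existence test is passed in so the logic stays testable.

```javascript
// Hypothetical check: which package.json dependencies have no installed copy?
// `exists` is injected; in a real script you would pass fs.existsSync.
function missingDependencies(pkg, skillDir, exists) {
  return Object.keys(pkg.dependencies || {}).filter(
    (name) => !exists(`${skillDir}/node_modules/${name}/package.json`)
  );
}

const manifest = {
  name: "calendar-summary",
  version: "1.0.0",
  type: "module",
  dependencies: { "date-fns": "^3.0.0" },
};
const installedFiles = new Set(); // pretend npm install hasn't run yet
console.log(missingDependencies(manifest, ".", (p) => installedFiles.has(p)));
```

With real files, you could run `missingDependencies(JSON.parse(fs.readFileSync("package.json", "utf8")), ".", fs.existsSync)` from the skill folder. An empty array means the dependencies are in place.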

Implement core functionality

Action handler structure

The instructions section is where the skill actually does something. This isn't code — it's prose that tells OpenClaw what to do when the skill is triggered.

I wrote mine like I was explaining the task to a coworker:

```markdown
## Instructions

When the user asks for a calendar summary:

1. Call the calendar API with today's date
2. Parse the response for events with start times and titles
3. Format each event as: `HH:MM - Title (Duration)`
4. Group morning (before noon) and afternoon (after noon)
5. Return as plain text, not a table

Example output:

Morning:
09:00 - Team standup (30 min)
10:30 - Design review (60 min)
Afternoon:
14:00 - Client call (45 min)
```

The more specific the better. If I wanted times in 12-hour format, I had to say that. If I wanted canceled events excluded, I had to mention it.
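If you move the formatting into a helper script like format-events.js, the instructions above map onto a small function. This is a sketch, not the actual script. Three assumptions apply. The event shape `{ title, start, end, cancelled }` with local-time ISO strings is invented, and real calendar APIs differ. Events are assumed to be filtered to today already. An event starting exactly at 12:00 counts as afternoon.

```javascript
// Sketch of the formatting step (not the author's actual format-events.js).
// Assumed event shape: { title, start, end, cancelled? } with local-time ISO
// strings like "2024-05-06T09:00:00". Times are read straight from the string,
// so no time-zone conversion happens, and events are assumed to be today's.
function toMinutes(iso) {
  const match = /T(\d{2}):(\d{2})/.exec(iso);
  if (!match) throw new Error(`No time of day in: ${iso}`);
  return Number(match[1]) * 60 + Number(match[2]);
}

function hhmm(minutes) {
  const h = String(Math.floor(minutes / 60)).padStart(2, "0");
  const m = String(minutes % 60).padStart(2, "0");
  return `${h}:${m}`;
}

function formatEvents(events) {
  const NOON = 12 * 60;
  const rows = events
    .filter((e) => !e.cancelled) // canceled events have to be excluded explicitly
    .map((e) => {
      const start = toMinutes(e.start);
      const duration = toMinutes(e.end) - start; // same-day events only
      return { start, line: `${hhmm(start)} - ${e.title} (${duration} min)` };
    })
    .sort((a, b) => a.start - b.start);

  if (rows.length === 0) return "No events scheduled for today.";

  const morning = rows.filter((r) => r.start < NOON);
  const afternoon = rows.filter((r) => r.start >= NOON); // 12:00 counts as afternoon
  const out = [];
  if (morning.length > 0) out.push("Morning:", ...morning.map((r) => r.line));
  if (afternoon.length > 0) out.push("Afternoon:", ...afternoon.map((r) => r.line));
  return out.join("\n");
}

console.log(
  formatEvents([
    { title: "Client call", start: "2024-05-06T14:00:00", end: "2024-05-06T14:45:00" },
    { title: "Team standup", start: "2024-05-06T09:00:00", end: "2024-05-06T09:30:00" },
    { title: "Design review", start: "2024-05-06T10:30:00", end: "2024-05-06T11:30:00" },
    { title: "Old sync", start: "2024-05-06T16:00:00", end: "2024-05-06T16:30:00", cancelled: true },
  ])
);
```

Run as-is, it prints the Morning/Afternoon layout from the example output with the canceled event dropped. Some APIs return all-day events as date-only strings (for example "2024-05-06"), and those make `toMinutes` throw. That is one obvious place to add handling.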

Error handling patterns

This took me two iterations to get right. My first version didn't handle missing API keys or empty calendars, which meant OpenClaw would just... stop and say "I can't do that."

I added guardrails directly in the SKILL.md:

```markdown
## Error handling

If CALENDAR_API_KEY is not set:

- Respond: "Calendar API key not configured. Set it in openclaw.json."

If the API returns an error:

- Log the error message
- Respond: "Couldn't fetch calendar. Check API permissions."

If no events are found:

- Respond: "No events scheduled for today."
```

Not elegant, but it works. More importantly, when something breaks, I know what went wrong.
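If your helper script also needs to fail gracefully, the same three branches translate almost line for line. This is a minimal sketch. `respond` and the `{ error, events }` result shape are hypothetical. The formatter is passed in so the block stands alone.

```javascript
// Sketch of the three error branches (hypothetical helper, not an OpenClaw API).
// `result` is an assumed shape: { error?: string, events?: Array }.
function respond(env, result, format) {
  // Missing configuration is checked first, matching the order in SKILL.md.
  if (!env.CALENDAR_API_KEY) {
    return "Calendar API key not configured. Set it in openclaw.json.";
  }
  if (result.error) {
    // Log the details for debugging; keep the user-facing message generic.
    console.error(`[calendar-summary] API error: ${result.error}`);
    return "Couldn't fetch calendar. Check API permissions.";
  }
  const events = result.events || [];
  if (events.length === 0) {
    return "No events scheduled for today.";
  }
  return format(events);
}

const listTitles = (events) => events.map((e) => e.title).join("\n");
console.log(respond({}, { events: [] }, listTitles));
console.log(respond({ CALENDAR_API_KEY: "k" }, { error: "403 Forbidden" }, listTitles));
console.log(respond({ CALENDAR_API_KEY: "k" }, { events: [] }, listTitles));
```

In a real script, you would pass `process.env` and the parsed API response. The user-facing strings stay identical to the ones in SKILL.md, so the messages look the same whether the skill's instructions or the script produced them.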

Logging and observability

OpenClaw doesn't have built-in debug logs for skills. When something fails, you get a generic error that references internal OpenClaw code, not your skill.

What helped: I added a DEBUG=1 flag to my script and logged every step to ~/.openclaw/logs/skills.log. Then I could grep for my skill name and see exactly where it broke:

```shell
grep "calendar-summary" ~/.openclaw/logs/skills.log
```

Not pretty, but it worked better than guessing.
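Here is roughly what that kind of logging can look like. This sketch has several assumptions. `makeDebugLogger` is a hypothetical helper, and the line format is my own choice. Tagging each line with the skill name is what makes the grep work. The API key is masked before anything is written, following the "do not expose API keys" guardrail. The writer is injected so the example runs without touching disk.

```javascript
// Hypothetical DEBUG=1 logger for a helper script (not an OpenClaw API).
// Each line is tagged with the skill name so `grep "calendar-summary"` finds it,
// and the API key is masked before anything is written.
function makeDebugLogger({ skill, env, write, now = () => new Date() }) {
  if (env.DEBUG !== "1") return () => {}; // no-op unless DEBUG=1
  const secret = env.CALENDAR_API_KEY;
  return (step, detail = "") => {
    let line = `${now().toISOString()} [${skill}] ${step}: ${detail}`;
    if (secret) line = line.split(secret).join("***"); // never log the raw key
    write(line + "\n");
  };
}

// Demo with an in-memory writer and a fixed clock so the output is predictable.
const lines = [];
const log = makeDebugLogger({
  skill: "calendar-summary",
  env: { DEBUG: "1", CALENDAR_API_KEY: "abc123" },
  write: (text) => lines.push(text),
  now: () => new Date("2024-05-06T07:00:00Z"),
});
log("fetch", "GET /events?key=abc123");
log("format", "3 events");
process.stdout.write(lines.join(""));
```

To write to the file mentioned above, pass something like `(text) => fs.appendFileSync(path.join(os.homedir(), ".openclaw/logs/skills.log"), text)`. Node's `fs` doesn't expand `~` on its own, and the `logs` directory has to exist already.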

Local testing workflow

Unit testing approach

I didn't write unit tests for the SKILL.md itself — it's just markdown. But I did test the helper script separately:

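```shell
cd ~/.openclaw/skills/calendar-summary/scripts
# Running ./format-events.js directly needs a "#!/usr/bin/env node" first
# line and execute permission (chmod +x format-events.js); otherwise use
# node format-events.js < test-input.json
./format-events.js < test-input.json
```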

This caught formatting bugs before I ever loaded the skill into OpenClaw.
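One way to make that kind of check repeatable is to save the expected output next to test-input.json and compare the two line by line. This is a minimal sketch. `firstDiff` is a hypothetical helper that reports the first mismatching line. It deliberately ignores trailing newlines and Windows line endings.

```javascript
// Hypothetical helper: compare expected vs. actual output line by line and
// report the first mismatch, or null when they match. CRLF is normalized and
// trailing newlines are ignored so editor quirks don't cause false failures.
function firstDiff(expected, actual) {
  const toLines = (text) => text.replace(/\r\n/g, "\n").replace(/\n+$/, "").split("\n");
  const exp = toLines(expected);
  const act = toLines(actual);
  for (let i = 0; i < Math.max(exp.length, act.length); i++) {
    if (exp[i] !== act[i]) {
      return { line: i + 1, expected: exp[i] ?? "<missing>", actual: act[i] ?? "<missing>" };
    }
  }
  return null;
}

const expectedText = "Morning:\n09:00 - Team standup (30 min)\n";
const actualText = "Morning:\n9:00 - Team standup (30 min)";
console.log(firstDiff(expectedText, actualText));
```

Feed it the saved expected file and the script's stdout. A `null` result means the formatter still matches.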

Integration testing with OpenClaw

Here's what I learned the hard way: some changes hot-reload, others require a full restart. There's no documentation on which is which.

Changes that hot-reload (usually):

- Edits to SKILL.md instructions
- Updates to helper scripts

Changes that need a restart:

- Adding new dependencies
- Changing metadata fields
- Enabling/disabling the skill in openclaw.json

I kept a terminal open with tail -f ~/.openclaw/logs/gateway.log while testing. When a skill loaded, I'd see a line like:

```text
[info] Loaded skill: calendar-summary
```

If I didn't see that after editing SKILL.md, I knew I needed to restart.
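If you'd rather not eyeball the tail output, you can script the same check. This sketch rests on one big assumption. It treats loaded skills as always appearing in `[info] Loaded skill: <name>` lines. That is the format described above, not a documented contract. `loadedSkills` and `reloadedAfterEdit` are hypothetical helpers.

```javascript
// Hypothetical helpers for scripting the gateway.log check. The regex assumes
// the "[info] Loaded skill: <name>" line format described above.
function loadedSkills(logText) {
  return Array.from(logText.matchAll(/\[info\] Loaded skill: (\S+)/g), (m) => m[1]);
}

// True if `skill` was (re)loaded in the part of the log written after `logBefore`.
// Assumes the log only grew in between (no rotation or truncation).
function reloadedAfterEdit(logBefore, logAfter, skill) {
  const fresh = logAfter.slice(logBefore.length);
  return loadedSkills(fresh).includes(skill);
}

const before = "[info] Loaded skill: hello-test\n";
const after = before + "[info] Loaded skill: calendar-summary\n";
console.log(reloadedAfterEdit(before, after, "calendar-summary")); // true
console.log(reloadedAfterEdit(after, after, "calendar-summary")); // false
```

In practice, you would read gateway.log once before saving SKILL.md and again a few seconds after. A `false` result is the cue to restart.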

Debugging techniques

When my skill wasn't showing up, I ran:

```shell
curl http://localhost:3000/api/skills
```

This listed all loaded skills. If mine wasn't there, I'd check:

1. Is the folder in ~/.openclaw/skills/?
2. Is the skill enabled in openclaw.json?
3. Are dependencies installed?
4. Is the YAML frontmatter valid? (Indentation matters.)

Most of my issues were #4. YAML is unforgiving.
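Since indentation is the usual culprit, a small lint can catch the two easiest mistakes to make. This is a heuristic sketch, not a YAML parser. The hypothetical `lintFrontmatter` takes the text between the `---` lines and flags two things. The first is tabs in indentation, which the YAML spec forbids. The second is any value-less key like `metadata:` whose next line isn't indented deeper. YAML reads that as an empty value rather than a nested block. A key followed by a `- item` list at the same indent is valid YAML, so the lint allows it.

```javascript
// Heuristic lint for SKILL.md frontmatter (hypothetical helper, not a parser).
// Input: the text between the opening and closing "---" lines.
function lintFrontmatter(frontmatter) {
  const lines = frontmatter.split(/\r?\n/);
  const indentOf = (line) => line.length - line.trimStart().length;
  const warnings = [];

  lines.forEach((line, i) => {
    // Tabs anywhere in the leading whitespace are not allowed in YAML indentation.
    if (/^ *\t/.test(line)) {
      warnings.push(`line ${i + 1}: tab used for indentation`);
    }
    // A key with nothing after the colon should have a nested block under it.
    if (/^\s*[\w-]+:\s*$/.test(line)) {
      const next = lines.slice(i + 1).find((l) => l.trim() !== "");
      const nested =
        next !== undefined &&
        (indentOf(next) > indentOf(line) ||
          (indentOf(next) === indentOf(line) && next.trimStart().startsWith("- ")));
      if (!nested) {
        warnings.push(`line ${i + 1}: "${line.trim()}" has nothing indented under it`);
      }
    }
  });
  return warnings;
}

// A flattened frontmatter: every line at column 0.
console.log(lintFrontmatter('name: calendar-summary\nmetadata:\nopenclaw:\nemoji: "x"'));
```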

Documentation and user experience

README and usage examples

I didn't write a README initially. Big mistake.

When I came back to this skill three weeks later, I couldn't remember what environment variables it needed or how to trigger it. So I added a README.md:

````markdown
# Calendar Summary Skill

Generates a formatted summary of today's calendar events.

## Setup

1. Get an API key from your calendar provider
2. Add to openclaw.json:

   ```json
   "calendar-summary": {
     "enabled": true,
     "env": {
       "CALENDAR_API_KEY": "your-key-here"
     }
   }
   ```

3. Restart OpenClaw

## Usage

Ask: "show me today's calendar" or "what's on my schedule"

## Troubleshooting

- "API key not configured" → Check openclaw.json
- "No events scheduled for today" → Confirm calendar has events for today
````

This saved me from re-reading the code every time I needed to reconfigure something.

## Package and publish

### Version numbering strategy

ClawHub uses semver. I started at 1.0.0 because the skill worked and I was using it daily.

If I fix a bug: 1.0.1

If I add a feature: 1.1.0

If I break compatibility: 2.0.0

Simple. No overthinking.
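The rule is mechanical enough to script. This is a minimal sketch of that bump logic. `bumpVersion` and its `fix`/`feature`/`breaking` labels are hypothetical, and it only handles plain MAJOR.MINOR.PATCH strings. If you need pre-release tags, the `semver` package on npm has an `inc()` function for this.

```javascript
// Sketch of the bump rule: bug fix -> patch, feature -> minor,
// breaking change -> major. Plain MAJOR.MINOR.PATCH only, no pre-release tags.
function bumpVersion(version, change) {
  const match = /^(\d+)\.(\d+)\.(\d+)$/.exec(version);
  if (!match) throw new Error(`Not a plain semver version: ${version}`);
  let [major, minor, patch] = match.slice(1).map(Number);
  switch (change) {
    case "fix":
      patch += 1;
      break;
    case "feature":
      minor += 1;
      patch = 0;
      break;
    case "breaking":
      major += 1;
      minor = 0;
      patch = 0;
      break;
    default:
      throw new Error(`Unknown change type: ${change}`);
  }
  return `${major}.${minor}.${patch}`;
}

console.log(bumpVersion("1.0.0", "fix")); // 1.0.1
console.log(bumpVersion("1.0.1", "feature")); // 1.1.0
console.log(bumpVersion("1.1.0", "breaking")); // 2.0.0
```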

### Publishing to ClawHub

ClawHub is the registry for OpenClaw skills. Publishing is straightforward once you know the metadata quirks.

First, I had to fix my frontmatter. The field is `metadata.clawdbot`, not `metadata.openclaw` (learned this from a GitHub gist by someone who published six versions before figuring it out):

```yaml
metadata:
  clawdbot:
    emoji: "📅"
    requires:
      env: ["CALENDAR_API_KEY"]
    files: ["scripts/*"]
    homepage: "https://github.com/anna/calendar-summary"
```

Then I ran:

```shell
clawhub publish ~/.openclaw/skills/calendar-summary
```

It prompted for a changelog (I wrote "Initial release") and uploaded the skill. ClawHub scans for security issues automatically — mine passed because I wasn't doing anything risky.

Publishing unlocked something unexpected: other people started using it and reporting bugs I never hit. The first issue was someone whose calendar API returned events in a different format than mine. I added handling for that in 1.0.1.

I'm still using this skill every morning. It's not perfect — sometimes it hiccups on all-day events — but it saves me about three minutes of clicking through tabs. And because I built it myself, I can fix the rough edges when they bother me enough.

If you're thinking about building a skill, start small. Pick one annoying thing. Build the simplest version that works. Then decide if you want to polish it or move on.

If you like the idea of small automations but not the YAML, restarts, and dependency juggling, that friction is real. We built Macaron to let you generate and run practical AI mini-tools without wiring everything by hand. Try it here → https://macaron.im/