NanoClaw vs OpenClaw (2026): Why I Switched After 3 Weeks

Hey fellow AI tinkerers — if you've been running a personal AI assistant on your own machine, you've already made the call most people haven't. This one's for you.

I'm Hanks. I test automation tools and AI systems inside real workflows. Not demos. Real tasks that need to run at 9am Monday without me babysitting them.

Three weeks ago, I ripped OpenClaw out of my setup and replaced it with NanoClaw. This is what I found.

The Problem I Was Actually Trying to Solve

Let me start where this actually began — not with NanoClaw, not with OpenClaw.

It started with a recurring task that kept failing silently.

I had set up a scheduled job in OpenClaw: pull together a weekly briefing from a few sources, summarize it, send it to myself via Telegram. Simple enough. Except it kept dying. No error. No log. Just... nothing in my inbox on Monday morning.

I went digging. Took me two hours to even locate which of OpenClaw's 52+ modules was responsible. Then another hour to understand why a shared memory process was dropping the job under load.
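For context, here is a minimal sketch of the kind of wrapper that makes a failure like this visible. It is not OpenClaw or NanoClaw code; `run_logged`, the job name, and the JSON-lines log file are all hypothetical names for illustration. The idea is only that every run, successful or not, leaves a record behind.

```python
import json
import time
import traceback
from pathlib import Path
from typing import Callable


def run_logged(name: str, job: Callable[[], object], log_path: Path) -> bool:
    """Run a job and append one JSON line describing the outcome.

    Returns True if the job finished, False if it raised.
    """
    record = {"job": name, "started": time.time()}
    try:
        job()
        record["status"] = "ok"
    except Exception as exc:  # deliberately broad: any failure must leave a trace
        record["status"] = "failed"
        record["error"] = repr(exc)
        record["traceback"] = traceback.format_exc()
    record["finished"] = time.time()

    # Append, never overwrite, so earlier runs stay inspectable.
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record) + "\n")
    return record["status"] == "ok"
```

With something like this in place, an empty inbox on Monday morning comes with either a "failed" line or a missing line, and both tell you where to look.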

That's when I asked myself the question I should have asked earlier:

"Do I actually understand what this software is doing to my machine?"

The honest answer was no.

What OpenClaw Actually Looks Like Under the Hood

I don't want to be unfair to OpenClaw. Peter Steinberger built something genuinely impressive. The feature breadth is real — 15+ channels, Gmail, Calendar, GitHub, Spotify, browser automation. Over 150,000 instances running worldwide. Andrej Karpathy praised it publicly. That's not nothing.

But here's what the architecture actually looks like:

- 52+ modules, 45+ dependencies

- Everything runs in one Node process with shared memory

- Security is handled at the application level — allowlists, pairing codes, permission checks in code

- The codebase runs to 430,000+ lines

Application-level security means: if one part of the system is compromised or misbehaves, that code can reach everything else in the same process. There's no OS-level wall between your agent and your host machine.
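To make that concrete, here is a toy sketch of an in-process allowlist. This is not OpenClaw's code; `SECRETS`, `ALLOWED_TOOLS`, `call_tool`, and `misbehaving_module` are hypothetical names. It only illustrates the general property: a permission check written in code protects callers who go through it, and nothing else in the same process is forced to.

```python
class Secrets:
    """Stands in for anything sensitive held in process memory."""

    def __init__(self) -> None:
        self.telegram_token = "hypothetical-token"


SECRETS = Secrets()
ALLOWED_TOOLS = {"summarize"}


def call_tool(tool_name: str) -> str:
    """The 'front door': enforces the allowlist before running a tool."""
    if tool_name not in ALLOWED_TOOLS:
        raise PermissionError(f"{tool_name} is not on the allowlist")
    return f"ran {tool_name}"


def misbehaving_module() -> str:
    """Code in the same process that simply skips the front door.

    It never calls call_tool, so the allowlist check never runs,
    and shared memory is directly reachable.
    """
    return SECRETS.telegram_token
```

The check itself works as written. The problem is that any module sharing the process can read `SECRETS` without asking, which is the gap a container boundary is meant to close.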

For a casual experiment, fine. For something I'm letting run autonomously and touch my files, my calendar, my messages? That kept nagging at me.

Enter NanoClaw. And My First Reaction Was Skepticism.

I'll be honest — when I first saw NanoClaw described as "the secure alternative," I rolled my eyes a little. That's what every lighter fork says.

But the numbers stopped me:

- ~3,900 lines of code across 15 files

- One process

- Agents run in actual Linux containers (Docker on Linux, Apple Container on macOS) — not application sandboxes

- Built directly on Anthropic's Claude Agent SDK

- No configuration files

That last one is the weird part. No config files. At all.

My first question was: "Then how do you customize anything?"

The answer is: you talk to Claude Code. You want Telegram? Run /add-telegram. Claude reads a Skills document, installs the dependencies, modifies the source code, configures the bot token, and runs connectivity tests. You watch it happen.

I kept waiting for this to fail. It didn't.

The Real Test: Three Weeks of Daily Workflows

Here's what I actually ran through NanoClaw:

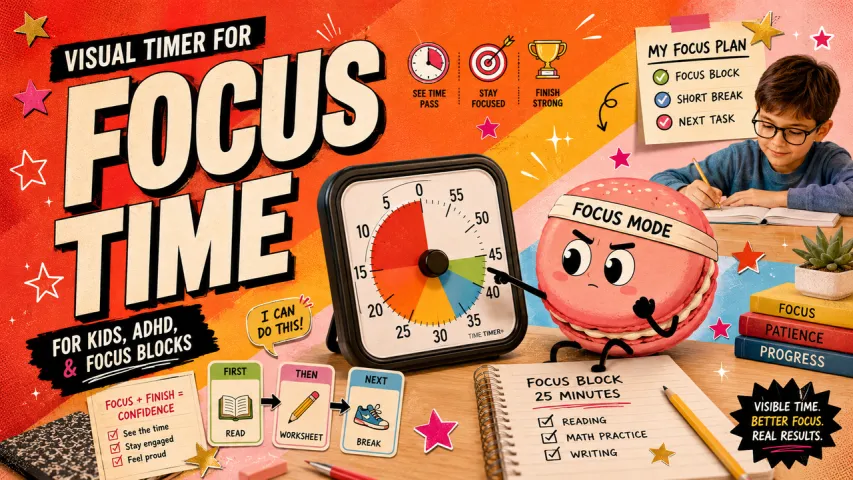

Week 1: Core setup + scheduled tasks

Setup took three commands:

git clone https://github.com/qwibitai/nanoclaw.git

cd nanoclaw

claude

Then I ran /setup. Claude handled dependencies, authentication, container configuration, and service setup. I didn't write a single config line.

First scheduled task I tried: daily morning briefing at 9am, pulling from two sources, formatted summary sent to WhatsApp. It ran. Every day. No babysitting.
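The scheduling logic behind a "daily at 9am" task is small. Here is a sketch of how the next run time could be computed. I'm not claiming this is how NanoClaw's scheduler does it; `next_daily_run` is a hypothetical helper, and the example date is arbitrary.

```python
from datetime import datetime, time, timedelta


def next_daily_run(now: datetime, at: time = time(9, 0)) -> datetime:
    """Return the next occurrence of the wall-clock time `at`, strictly after `now`."""
    # Today's candidate, carrying over the timezone (or lack of one) from `now`.
    candidate = datetime.combine(now.date(), at, tzinfo=now.tzinfo)
    if candidate <= now:
        # Already at or past today's slot, so schedule tomorrow's.
        candidate += timedelta(days=1)
    return candidate


print(next_daily_run(datetime(2026, 2, 2, 8, 30)))  # 2026-02-02 09:00:00
print(next_daily_run(datetime(2026, 2, 2, 9, 0)))   # 2026-02-03 09:00:00
```

Treating "exactly 9:00" as already done keeps a task that fires on the minute from rescheduling itself for the same minute.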

Week 1 verdict: the thing that broke in OpenClaw just... worked.

Week 2: Stress-testing the Skills system

This is where I got suspicious. "Skills over features" sounds great in theory. In practice, I wanted to know: what happens when a skill breaks mid-installation?

I ran /add-telegram and deliberately interrupted it halfway through. Then asked Claude Code what happened. It told me exactly what had been installed, what hadn't, and offered to resume or roll back.
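I don't know how Claude Code tracks this internally, so treat the following as a sketch of the general idea only: if each installation step is recorded as it completes, an interrupted install can report what's done, resume, or undo. `Step` and `Install` are hypothetical names, not part of NanoClaw or the Skills system.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    name: str
    apply: Callable[[], None]
    undo: Callable[[], None]


@dataclass
class Install:
    steps: list[Step]
    done: list[str] = field(default_factory=list)  # names of completed steps, in order

    def run(self) -> None:
        """Apply remaining steps in order; calling again after a failure resumes."""
        for step in self.steps:
            if step.name in self.done:
                continue
            step.apply()  # if this raises, the step is not recorded as done
            self.done.append(step.name)

    def status(self) -> dict[str, list[str]]:
        pending = [s.name for s in self.steps if s.name not in self.done]
        return {"installed": list(self.done), "pending": pending}

    def rollback(self) -> None:
        """Undo completed steps in reverse order."""
        by_name = {s.name: s for s in self.steps}
        while self.done:
            by_name[self.done[-1]].undo()
            self.done.pop()
```

After an interruption, `status()` gives the "what was installed, what wasn't" answer, and the choice between `run()` and `rollback()` is the resume-or-roll-back offer.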

I asked it to add a custom trigger word. Plain English instruction. Done.

I asked it to store weekly conversation summaries to a file. Done.

Here's what caught me off guard: because the codebase is small enough to actually read, when something confused me I could just look at the source. I've never done that with OpenClaw. The codebase is too large to navigate without a map.
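If you want to check the "small enough to read" claim on any checkout yourself, a few lines will do it. This is a rough sketch: `count_source_lines` is a hypothetical helper, the `.ts` suffix and the `node_modules` exclusion are my assumptions about what counts as source, and the numbers it gives will depend on those choices.

```python
from pathlib import Path


def count_source_lines(root: Path, suffixes: tuple[str, ...] = (".ts",)) -> tuple[int, int]:
    """Return (file_count, line_count) for source files under `root`."""
    files = 0
    lines = 0
    for path in sorted(root.rglob("*")):
        # Skip vendored dependencies; only look at path parts below `root`.
        if "node_modules" in path.relative_to(root).parts:
            continue
        if path.is_file() and path.suffix in suffixes:
            files += 1
            with path.open(encoding="utf-8", errors="replace") as fh:
                lines += sum(1 for _ in fh)
    return files, lines
```

Calling `count_source_lines(Path("nanoclaw"))` on a local clone returns a pair you can compare against the figures quoted in this post.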

Week 3: Agent Swarms

NanoClaw bills itself as the first personal AI assistant to support Agent Swarms: teams of specialized agents that work together on complex tasks, each running in its own isolated container environment.

I set up two agents: one to monitor a folder for new documents and summarize them, another to handle scheduling-related messages. Each had its own memory file (CLAUDE.md), its own container, its own context.
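Here is roughly what "its own container, its own context" means at the command level. This is a sketch, not NanoClaw's actual invocation: the image name, the `/data/agents` layout, and the `container_argv` helper are hypothetical, and it covers only the Docker case. The `docker run` flags themselves (`--rm`, `--name`, `--volume`, `--workdir`) are standard.

```python
from pathlib import PurePosixPath


def container_argv(
    agent: str,
    base_dir: PurePosixPath,
    image: str = "agent-image:latest",  # hypothetical image name
) -> list[str]:
    """Build a `docker run` command that mounts only this agent's directory."""
    agent_dir = base_dir / agent  # holds this agent's CLAUDE.md and working files
    return [
        "docker", "run", "--rm",
        "--name", f"agent-{agent}",
        "--volume", f"{agent_dir}:/workspace",  # the only host path the container sees
        "--workdir", "/workspace",
        image,
    ]


for name in ("docs", "scheduler"):
    print(" ".join(container_argv(name, PurePosixPath("/data/agents"))))
```

The point is what's absent: the `docs` agent's command contains no path to the `scheduler` agent's directory, so there is nothing for it to read even if it misbehaves.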

Did it feel like science fiction? A little. Did it actually work inside a real repeating workflow? Yes — with caveats I'll get to.

Where NanoClaw Falls Short (Being Honest)

This section is the reason I'm writing this instead of just linking to the GitHub.

Multi-model support doesn't exist. NanoClaw runs on Claude via the Anthropic API. That's it. If you need OpenAI, local models, or want to swap providers — look elsewhere. nanobot from HKUDS handles this better, with support for OpenRouter, MiniMax, and others.

Channel coverage is limited out of the box. WhatsApp ships by default. Everything else is a Skill. If you need your agent simultaneously on WhatsApp + Discord + Slack without touching code, nanobot (15,400 GitHub stars) handles that more gracefully.

The Skills model requires Claude Code. If you don't have Claude Code in your workflow, the "customize by talking to Claude" pitch loses some of its magic. You're back to reading documentation.

Agent Swarms are early. They work. But coordinating multi-agent tasks across complex workflows still requires you to think carefully about what each agent can see and do. This isn't plug-and-play territory yet.

The Architectural Shift Worth Paying Attention To

NanoClaw's creator, Gavriel Cohen, describes it as "AI-native" software: a system designed to be managed and extended primarily through AI interaction rather than manual configuration. In his words: "If you want Telegram, rip out the WhatsApp and put in Telegram. Every person should have exactly the code they need to run their agent. It's not a Swiss Army knife; it's a secure harness that you customize by talking to Claude Code."

This is the thing I keep thinking about.

Every major AI assistant framework I've used has tried to be comprehensive — cover every platform, every use case, every user. The result is software that nobody fully understands, including the people maintaining it.

NanoClaw is betting on the opposite: make it small enough that you can read it. Make customization the model, not features. Let the AI extend the software rather than the developers.

Three weeks in, I think there's something real here. The scheduled tasks run. The containers isolate properly. The Skills system is genuinely usable. And when something breaks, I can actually find it.

The Broader Landscape (February 2026)

NanoClaw isn't the only project that emerged from OpenClaw's gravity. A few others worth knowing:

nanobot (HKUDS): Python-based, ~4,000 lines, 15,400 GitHub stars. Best multi-channel coverage — Telegram, Discord, WhatsApp, Slack, Feishu, DingTalk, Email, QQ. Launched February 2, 2026. If you need cross-platform reach, this is the one to look at.

ZeroClaw: Rust-based, 3.4MB binary, under 5MB RAM. For people who want minimal footprint above everything else.

IronClaw: The most hardened security model of any personal AI assistant I've found. WASM sandbox for tool execution, credential injection at the host boundary, per-tool rate limits. Significantly more complex to set up, but built for people who treat security as a first-class requirement.

OpenClaw itself is undergoing a transition: its creator Peter Steinberger announced in February 2026 that he's joining OpenAI to build agents for a mainstream audience. OpenClaw is moving to an independent foundation, with OpenAI as sponsor. What that means for the project's direction is still unclear.

System Insights (What Actually Transferred to My Workflow)

After three weeks, here's what I know:

Smaller is auditable, and auditable is trustworthy. Not because I read every line — I didn't. But because I could. That changes how I think about what the software is doing.

Skills over Features is a real architectural model. The instinct to add everything to the core is strong. NanoClaw resists it by design. Over time, your instance does exactly what you need and nothing else. That's a different kind of value.

Container isolation matters more than I thought. Application-level security feels fine until something goes sideways. OS-level isolation means a misbehaving agent affects only its own container, not the host or the other agents. For something running autonomously with access to files and messages, that's not a minor distinction.

"Configure by talking" only works if the base is small enough. This is the key insight. Claude Code can rewrite NanoClaw's source because the whole codebase fits comfortably in a language model's context window. It couldn't do that with OpenClaw's 430,000+ lines.
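A back-of-the-envelope check on that claim, using the line counts quoted in this post. Two numbers here are my assumptions, not measurements: roughly 10 tokens per line of code, and a 200,000-token context window. Change either and the totals move, but the gap between the two codebases stays about two orders of magnitude.

```python
TOKENS_PER_LINE = 10      # assumption: rough average for source code
CONTEXT_WINDOW = 200_000  # assumption: context window size in tokens


def estimated_tokens(lines: int, tokens_per_line: int = TOKENS_PER_LINE) -> int:
    """Crude token estimate for a codebase of the given line count."""
    return lines * tokens_per_line


def fits_in_context(lines: int, window: int = CONTEXT_WINDOW) -> bool:
    return estimated_tokens(lines) <= window


for name, lines in (("NanoClaw", 3_900), ("OpenClaw", 430_000)):
    print(name, estimated_tokens(lines), fits_in_context(lines))
# NanoClaw 39000 True
# OpenClaw 4300000 False
```

Under these assumptions NanoClaw uses about a fifth of the window, leaving room for the conversation and the Skills document, while OpenClaw would need more than twenty windows just to be read once.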

Should You Switch?

Switch to NanoClaw if:

- You're running scheduled tasks and autonomous workflows that need to actually stay running

- Security and auditability matter to you

- You're comfortable with Claude as your only model

- You want to customize through conversation rather than config files

Stay on OpenClaw (or try nanobot) if:

- You need multi-model support

- You need broad channel coverage without custom Skills work

- You're comfortable with complex systems and don't mind the weight

Don't touch any of these yet if:

- You want a polished consumer product

- You're not comfortable with a system that can touch your files and messages

A Note on Where This Is Going

Anthropic launched Claude Cowork in January 2026, a first-party desktop agent built on the same Claude Agent SDK that powers NanoClaw. It supports MCP plugins for external services and parallel sub-agents, and it shipped on both macOS and Windows. Bloomberg reported a $285 billion SaaS stock selloff triggered by its release.

The fact that Anthropic's own agent product and NanoClaw share the same SDK foundation isn't a coincidence. The "Claude Agent SDK as harness" model is becoming infrastructure.

Personal AI agents that run on your own machine, touch your own data, and operate on your own schedule — that's not a niche experiment anymore. It's becoming a workflow layer.

The question is which version of that layer you trust enough to actually run.

At Macaron, we've been watching the same friction up close: ideas and plans that live in conversations but never make it into actual, executable next steps. We built our agent around exactly that handoff — taking the context from a conversation and moving it into structured, repeatable workflows without losing the thread. If you want to test whether your own workflow can survive that translation, you can run a real task in Macaron and judge the output yourself. Low commitment, no lock-in.

Data sources:

- NanoClaw GitHub: github.com/qwibitai/nanoclaw

- VentureBeat coverage, February 23, 2026

- nanobot GitHub: github.com/HKUDS/nanobot

- OpenClaw/NanoClaw landscape analysis, jagans.substack.com, February 22, 2026