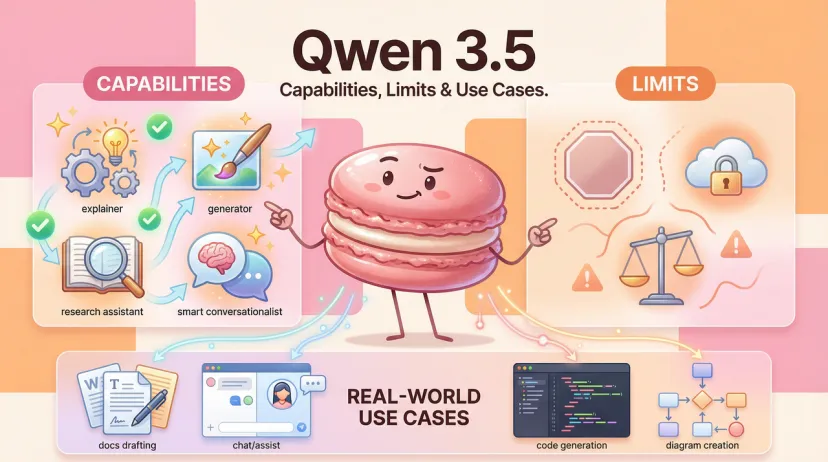

What Is Qwen 3.5? Capabilities, Limits & Real-World Use Cases (2026)

Hi everyone — if you've been searching for "Qwen 3.5" this week and getting a mix of speculation, placeholder pages, and half-formed reports, you're not imagining it. Things are genuinely in flux right now.

I'm Hanks. I test AI tools in real workflows, and I try not to publish until I have something solid to test. So let me be upfront about the situation as of February 12, 2026: Qwen 3.5 has not been officially released yet. But it's coming fast, and there's enough confirmed information — from Alibaba's own HuggingFace pull requests and the underlying architecture — to give you a grounded picture of what you're actually looking at.

This article covers what's confirmed, what's still pre-release, and — critically — what the current Qwen3 generation tells us about real-world capabilities and limits right now. If you're evaluating whether to build on Qwen or wait for 3.5, this is the piece that answers that.

Qwen 3.5 in One Minute (What It Is + Who It's For)

Based on pull requests that surfaced on HuggingFace around February 9, 2026, Qwen 3.5 appears to adopt a new hybrid attention mechanism and is highly likely to be a VLM-class model with native visual understanding. The pull requests point to two models, one at 9 billion parameters and the other at 35 billion parameters, with native multimodal support for the first time. Both are expected to use the company's next-generation architecture, which was first previewed in September 2025 in an experimental model called Qwen3-Next.

That's the headline. Here's what that means practically:

The 9B model is sized for consumer hardware and local deployment. The 35B-A3B (MoE, 35B total / ~3B active) is the efficiency play — a model that activates a small fraction of its parameters per inference, meaning you get significantly more capability than the active parameter count implies. This matches the pattern Alibaba established with Qwen3's MoE variants, where Qwen3-30B-A3B can run on a single high-end consumer GPU and showed performance comparable to much larger, closed-source models on tasks like coding.

Who Qwen 3.5 is for:

- Developers who want capable open-weight models they can self-host

- Teams that need multilingual output beyond English-Chinese (more on the 119-language coverage below)

- Anyone building agentic workflows who wants MCP-native tooling

- Local deployment use cases where API costs matter

Who it's not for right now: anyone who needs to ship production features today. Qwen 3.5 is not yet released as of this writing. The current answer for production workloads is Qwen3.

What's New vs Qwen3

Let me be precise about what we're comparing here. The current released generation is Qwen3, which Alibaba launched on April 29, 2025, including dense models (0.6B, 1.7B, 4B, 8B, 14B, and 32B parameters) and MoE models (30B with 3B active, and 235B with 22B active), all open-sourced and available globally.

The most significant architectural shift in Qwen 3.5 is the move to the Qwen3-Next architecture. According to Wikipedia's Qwen page (updated this week), Qwen3-Next introduces several key improvements over the Qwen3 architecture: a hybrid attention mechanism, a highly sparse mixture-of-experts (MoE) structure, training-stability-friendly optimizations, and a multi-token prediction mechanism for faster inference. The first model built on this architecture has 80B total parameters and 3B active parameters. It performs comparably to, or in some cases better than, Qwen3-32B while using less than 10% of its training cost in GPU hours. In inference, especially with contexts longer than 32K tokens, it reaches more than 10x higher throughput.

The other major change is native multimodal support. Qwen3 needed separate VL (vision-language) model variants for image input. If Qwen 3.5 integrates visual understanding directly into the base model, as the pull requests suggest, deployment gets considerably simpler.

Core Capabilities

Since Qwen 3.5 isn't testable yet, the honest move is to characterize capabilities from the Qwen3 foundation it's built on — because Qwen 3.5 is an architectural refinement of Qwen3, not a ground-up rebuild.

Reasoning. Qwen3 introduced the hybrid thinking/non-thinking mode toggle. In thinking mode, the model runs step-by-step chain-of-thought reasoning, which suits math, code, and structured logic. In non-thinking mode, it responds quickly for general dialogue. Qwen3 significantly improved reasoning on mathematics, code generation, and commonsense logic, surpassing the earlier QwQ models in thinking mode and the Qwen2.5 instruct models in non-thinking mode. Qwen 3.5 is expected to inherit this mode structure.
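Here's a minimal sketch of driving that toggle from application code. It assumes the `/think` and `/no_think` soft-switch tags described in the Qwen3 model cards; the `with_thinking_mode` helper is my own illustration, not part of any Qwen library, and some later checkpoints ship as separate thinking and instruct models, so check the model card for the one you run.

```python
from typing import Dict, List

Message = Dict[str, str]


def with_thinking_mode(messages: List[Message], thinking: bool) -> List[Message]:
    """Return a copy of `messages` with a soft-switch tag on the last user turn.

    Assumption: the checkpoint honors "/think" and "/no_think" tags in user
    messages, as the Qwen3 model cards describe.
    """
    tag = "/think" if thinking else "/no_think"
    out = [dict(m) for m in messages]  # copy each message so the input stays unchanged
    for message in reversed(out):
        if message["role"] == "user":
            message["content"] = f"{message['content'].rstrip()} {tag}"
            break
    return out


chat = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "Prove that the sum of two even numbers is even."},
]
fast = with_thinking_mode(chat, thinking=False)
slow = with_thinking_mode(chat, thinking=True)
```

The resulting message list can be passed to whatever chat client you already use; only the last user turn changes, so earlier history is untouched.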

Coding. This is a genuine strength in the Qwen family. Qwen3-Coder-Next achieves 32.4% pass@5 on fresh GitHub PRs, matching GPT-5-High. The base Qwen3 models also perform well on coding tasks, particularly Python and structured data manipulation.

Multilingual. Qwen3 supports 119 languages and dialects, with leading performance in translation and multilingual instruction-following. This is a practical differentiator — not marketing copy. Most frontier models optimized for English show noticeable quality drops in non-English languages. Qwen3's multilingual coverage is genuinely broader.

Agentic tool use. Qwen3 natively supports the Model Context Protocol (MCP) and robust function-calling, leading open-source models in complex agent-based tasks. If you're building workflows where the model needs to call external tools, query databases, or orchestrate sub-tasks, MCP support is meaningful.
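To make the function-calling side concrete, here's a rough sketch of the application half of a tool call. It assumes you serve the model behind an OpenAI-compatible endpoint that accepts JSON-schema tool definitions and returns the tool name plus a JSON string of arguments. The `get_order_status` tool and the `dispatch` helper are hypothetical, and no MCP server is involved here.

```python
import json
from typing import Any, Callable, Dict


def get_order_status(order_id: str) -> Dict[str, Any]:
    """Hypothetical tool; a real version would query a database."""
    return {"order_id": order_id, "status": "shipped"}


# Registry of callables the model is allowed to invoke.
TOOLS: Dict[str, Callable[..., Any]] = {"get_order_status": get_order_status}

# Schema sent with the chat request (OpenAI-style format, an assumption).
TOOL_SCHEMAS = [{
    "type": "function",
    "function": {
        "name": "get_order_status",
        "description": "Look up the shipping status of an order.",
        "parameters": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
    },
}]


def dispatch(tool_call: Dict[str, Any]) -> str:
    """Run one model-requested tool call and return the result as a JSON string."""
    name = tool_call["name"]
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        args = json.loads(tool_call["arguments"])
        return json.dumps(TOOLS[name](**args))
    except (json.JSONDecodeError, TypeError) as exc:
        # Malformed JSON or wrong argument names: report back instead of crashing.
        return json.dumps({"error": str(exc)})


result = dispatch({"name": "get_order_status", "arguments": '{"order_id": "A-17"}'})
```

Returning errors as JSON rather than raising lets you feed the failure back to the model as a tool message, which gives it a chance to correct its own arguments.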

Context window. Current Qwen3 large models support 128K tokens, extendable to 1 million tokens in the updated July 2025 variants. Qwen 3.5's context specs aren't confirmed yet.

Where Qwen3 (and Likely Qwen 3.5) Performs Best

These are the scenarios where I've seen Qwen-class models hold up well in real tasks — not benchmarks, actual workflows.

Writing and content generation. Qwen3 produces clean, coherent long-form output across languages. The July 2025 updates specifically noted "markedly better alignment with user preferences in subjective and open-ended tasks." In practice, this shows up as output that feels less formulaic than earlier Qwen generations — responses that actually adjust tone and structure to the request rather than defaulting to generic article formatting.

Coding assistance (mid-complexity tasks). SQL generation, Python scripting, API integration, and boilerplate — Qwen3 handles these reliably. Above a certain complexity ceiling (multi-file refactoring, architecture decisions), the specialized Qwen3-Coder variants outperform the base models significantly.

Multilingual document work. Translation, summarization, and cross-language analysis. I've run Qwen3-14B through Japanese and Korean documents and gotten accurate output without the garbled phrasing you get from English-first models attempting non-English work.

Local deployment with constrained hardware. The MoE models stand out here: Qwen3-30B-A3B runs comfortably on a single 80GB A100 or two 40GB cards with vLLM and FlashAttention. The 8B and 14B dense models run on consumer GPUs. If you're self-hosting, hardware is a real constraint, and Qwen respects it better than most frontier model families.
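A back-of-envelope check on those hardware numbers, as a sketch under stated assumptions: 30B total parameters, 2 bytes per parameter for bf16, and 0.5 bytes for 4-bit quantization. It counts weights only, so the KV cache and activations have to fit in whatever is left. Note that an MoE model keeps all experts in memory; the small active parameter count reduces compute per token, not the weight footprint. The function names are mine.

```python
def weight_memory_gb(total_params_billion: float, bytes_per_param: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes).

    Billions of parameters times bytes per parameter gives GB directly.
    """
    return total_params_billion * bytes_per_param


def headroom_per_gpu_gb(
    total_params_billion: float,
    bytes_per_param: float,
    gpu_gb: float,
    num_gpus: int = 1,
) -> float:
    """GB left on each GPU after an even split of the weights.

    A negative value means the weights alone do not fit.
    """
    per_gpu = weight_memory_gb(total_params_billion, bytes_per_param) / num_gpus
    return gpu_gb - per_gpu


# Assumed figures: 30B total parameters, bf16 weights.
bf16_single = headroom_per_gpu_gb(30, 2.0, gpu_gb=80)             # one 80GB card
bf16_dual = headroom_per_gpu_gb(30, 2.0, gpu_gb=40, num_gpus=2)   # two 40GB cards
int4_weights = weight_memory_gb(30, 0.5)                          # 4-bit quantized
```

Under these assumptions, bf16 weights come to about 60 GB, which leaves roughly 20 GB on a single 80GB card or 10 GB per card on two 40GB cards. A 4-bit quantized copy is about 15 GB of weights, which is where consumer GPUs come into play.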

Known Limits & Failure Patterns

These are actual problems I've hit with Qwen3-class models, which will likely carry forward into 3.5 unless specifically addressed.

Hallucinations on recent events. Like all models, Qwen3 has a knowledge cutoff. It will confidently generate plausible-sounding but incorrect information about events, product versions, and organizational details that postdate its training. Don't use it without retrieval grounding for anything time-sensitive.

Context drift in very long sessions. Past roughly 40–50K tokens of active context, instruction-following quality starts to degrade in my testing. The model technically supports 128K, but adherence to earlier instructions weakens as the conversation grows. For long-document work, chunked summarization with re-injection of key constraints outperforms single-pass long context in practice.
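The chunk-and-re-inject pattern can be sketched roughly like this. `call_model` is a placeholder for whatever client you use (it takes a prompt string and returns a string), character counts stand in for token counts, and the prompt wording is only an illustration.

```python
from typing import Callable, List


def chunk_text(text: str, max_chars: int) -> List[str]:
    """Pack paragraphs into chunks of at most max_chars where possible.

    A single paragraph longer than max_chars becomes its own oversized chunk.
    """
    chunks: List[str] = []
    current = ""
    for para in text.split("\n\n"):
        candidate = f"{current}\n\n{para}" if current else para
        if current and len(candidate) > max_chars:
            chunks.append(current)
            current = para
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


def summarize_long(
    text: str,
    constraints: str,
    call_model: Callable[[str], str],
    max_chars: int = 8000,
) -> str:
    """Rolling summary that restates the constraints in every prompt."""
    running = ""
    for chunk in chunk_text(text, max_chars):
        prompt = (
            f"Constraints (apply to every step):\n{constraints}\n\n"
            f"Summary so far:\n{running or '(none)'}\n\n"
            f"New section:\n{chunk}\n\n"
            "Update the summary to include the new section."
        )
        running = call_model(prompt)
    return running
```

Because every call sees the constraints at the top of a short prompt, the model never has to recall an instruction from tens of thousands of tokens earlier.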

Brittle specification handling. When given complex multi-constraint tasks ("write a function that does X, in style Y, using library Z, with these specific edge case behaviors"), Qwen3 will sometimes satisfy 3 of 4 constraints while silently dropping the fourth. This is more pronounced with the 8B and 14B models than with larger variants. The fix is structured output prompting and explicit constraint checklists, but you have to know to apply it up front.
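One way to set up an explicit constraint checklist, as a sketch: number the constraints in the prompt, ask for a JSON reply with one checklist entry per number, and flag anything the model did not confirm. The reply format and both helper names are my own assumptions. A self-reported checkmark is not proof the constraint was met, so treat this as a cheap first filter ahead of real tests.

```python
import json
from typing import List


def build_prompt(task: str, constraints: List[str]) -> str:
    """Render the task with numbered constraints and a required JSON reply shape."""
    numbered = "\n".join(f"{i}. {c}" for i, c in enumerate(constraints, 1))
    return (
        f"Task: {task}\n\n"
        f"Constraints:\n{numbered}\n\n"
        'Reply with JSON only: {"answer": "...", "checklist": {"1": true}} '
        "with one checklist entry per constraint number."
    )


def unmet_constraints(reply: str, constraints: List[str]) -> List[str]:
    """Return constraints the model did not explicitly confirm.

    An unparseable or malformed reply counts as every constraint unmet.
    """
    try:
        checklist = json.loads(reply).get("checklist", {})
    except (json.JSONDecodeError, AttributeError):
        return list(constraints)
    if not isinstance(checklist, dict):
        return list(constraints)
    return [
        c for i, c in enumerate(constraints, 1)
        if checklist.get(str(i)) is not True
    ]
```

If `unmet_constraints` returns anything, a retry prompt that quotes only the missed items tends to be a cheaper fix than regenerating from scratch.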

Reasoning mode overhead. Thinking mode produces better outputs for hard problems but significantly increases latency and token consumption. For production deployments where speed matters, you need a strategy for when to enable it — not just "always on."
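A routing policy for this can start very simple. The sketch below is purely a heuristic of my own: the keyword list and the 5-second threshold are placeholders you would tune against your own traffic, not values from Qwen documentation.

```python
import re

# Hypothetical signal words for tasks that tend to benefit from step-by-step reasoning.
HARD_TASK_PATTERN = re.compile(
    r"\b(prove|derive|debug|refactor|optimi[sz]e|algorithm|step[- ]by[- ]step)\b",
    re.IGNORECASE,
)


def should_think(prompt: str, latency_budget_s: float, min_budget_s: float = 5.0) -> bool:
    """Decide whether to enable thinking mode for one request.

    Thinking is skipped when the caller cannot afford the extra latency,
    and otherwise enabled only for prompts that look like hard tasks.
    """
    if latency_budget_s < min_budget_s:
        return False
    return bool(HARD_TASK_PATTERN.search(prompt))
```

For example, `should_think("Please debug this function", latency_budget_s=30.0)` enables thinking, while the same prompt with a 1-second budget does not. A keyword match is crude, so a natural next step is logging which requests were routed where and comparing output quality.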

Who Should Use It (and Who Shouldn't)

Use Qwen3 now (and likely Qwen 3.5 when it ships) if:

- You need open-weight models under Apache 2.0 for commercial use

- You're deploying multilingual applications beyond English

- You want MCP-native agentic tool use without custom integration work

- You're running local inference on consumer or prosumer hardware

- Cost matters — Qwen2.5-72B matches GPT-4 on many benchmarks, and the pricing through Alibaba Cloud is significantly below comparable closed-source API rates

Wait for Qwen 3.5 specifically if:

- You need native multimodal input — image understanding built into the base model, not a separate VL variant

- You're building workflows where the Qwen3-Next architecture's 10x throughput improvement on long contexts matters

- You're targeting the 9B or 35B parameter size range specifically

Use something else if:

- You need the absolute state-of-the-art on English-language reasoning benchmarks today — Qwen3 is competitive but not the leader in that narrow category

- Your deployment requires an enterprise SLA with Western data residency guarantees — Alibaba Cloud's infrastructure has significant China-region dependencies

- You're not comfortable with a Chinese corporate entity's data handling, regardless of the Apache license on the weights

At Macaron, we see this pattern constantly: someone finds a model that fits their task, runs it in a one-off test, gets a good result, and then has no system for tracking what prompt configuration, model version, or temperature setting produced that result. Three days later they're rebuilding from scratch. We built Macaron to hold that context across sessions. If you're evaluating these models for an actual workflow, try running your test inside Macaron at macaron.im. Low commitment, no lock-in, and the results speak for themselves.