GPT-5.4 Pricing: Plans, API Costs & Is It Worth It?

Hey friends — if you've been staring at OpenAI's pricing page trying to figure out whether GPT-5.4 is going to wreck your API budget, this is the breakdown you actually need.

I've been running the numbers since launch day. And here's the thing that surprised me: GPT-5.4 costs more per token than GPT-5.2, but it might genuinely cost you less to run the same workflow. That's not a marketing line — there's real math behind it. Let me walk through it.

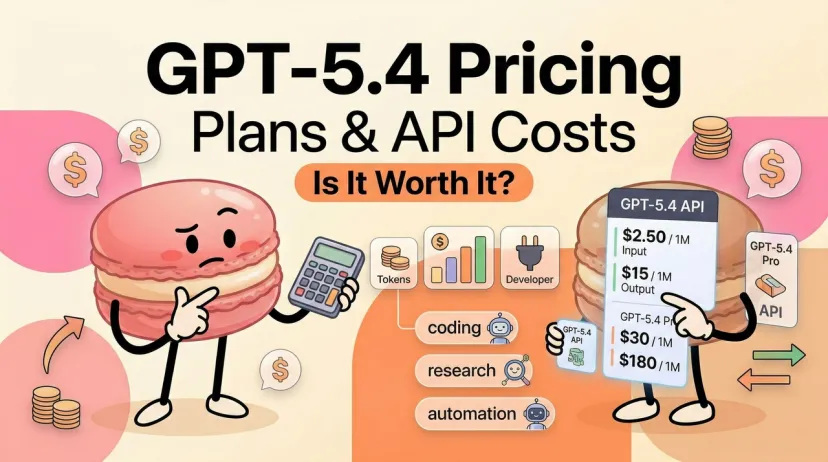

GPT-5.4 Pricing at a Glance

ChatGPT Plan Access

As of March 2026, GPT-5.4 Thinking is available to ChatGPT Plus, Team, and Pro users, replacing GPT-5.2 Thinking as the default reasoning model in ChatGPT.

GPT-5.4 Pro is available to Pro and Enterprise plans only. GPT-5.2 Thinking will remain available in Legacy Models for three months before being retired on June 5, 2026.

API Pricing Table

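All prices are per 1 million tokens, as listed on the OpenAI API pricing page. These are the figures quoted throughout this article:

- GPT-5.4: $2.50 input, $0.25 cached input, $15.00 output

- GPT-5.2: $1.75 input, $14.00 output

- GPT-5.4 Pro: $30.00 input, $180.00 output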

For models with the 1.05M context window, prompts with more than 272K input tokens are priced at 2× input and 1.5× output for the full session.
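To make the long-context rule concrete, here's a minimal sketch of a per-request cost estimator. It assumes the standard GPT-5.4 rates quoted in this article ($2.50 input, $15.00 output per million tokens) and applies the 2× / 1.5× multipliers to the whole request once input exceeds 272K tokens. The function and constant names are my own, and it ignores caching, so treat it as a back-of-the-envelope tool rather than a billing reference.

```python
# Rates quoted in this article, in USD per 1M tokens (check the pricing page before relying on them).
INPUT_RATE = 2.50
OUTPUT_RATE = 15.00
LONG_CONTEXT_THRESHOLD = 272_000  # input tokens


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one standard GPT-5.4 request, ignoring caching."""
    long_context = input_tokens > LONG_CONTEXT_THRESHOLD
    # Past the threshold, the multipliers apply to every token, not just the excess.
    input_rate = INPUT_RATE * (2.0 if long_context else 1.0)
    output_rate = OUTPUT_RATE * (1.5 if long_context else 1.0)
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


if __name__ == "__main__":
    print(f"100K in / 10K out: ${request_cost(100_000, 10_000):.3f}")
    print(f"300K in / 10K out: ${request_cost(300_000, 10_000):.3f}")
```

With these rates, a 100K-in / 10K-out request comes to about $0.40, while a 300K-in / 10K-out request comes to about $1.73. That's more than four times the cost for three times the input.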

GPT-5.4 vs GPT-5.2: Is It Really More Expensive?

Per-Token Price Increase

Yes, GPT-5.4 is more expensive per token than GPT-5.2. GPT-5.4 input is $2.50 versus $1.75 for GPT-5.2, and output is $15.00 versus $14.00 per million tokens. On raw token price alone, you're paying more.

But raw token price is the wrong comparison.

Why Total Cost Might Not Rise: Tool Search

Here's the part most pricing comparisons skip. GPT-5.4 introduces Tool Search — a mechanism where the model dynamically loads only the tool definitions it actually needs, rather than dumping your entire tool catalog into the prompt at the start of every request.

According to OpenAI's tool search docs, tool search lets the model dynamically search for and load tools into the context only when needed, reducing token usage, preserving the model cache better, and avoiding dumping a huge tool catalog into the prompt upfront.

The same docs indicate that putting your MCP servers behind the tool search layer, rather than loading every definition upfront, can cut token costs by nearly half.

For any agent workflow with 10+ tools, this isn't trivial. Loading 50 tool JSON schemas into every request at $2.50/M input adds up fast. Tool Search eliminates that overhead entirely for tools the model doesn't use on a given turn.

The Math: Same Task, Different Token Spend

Let's run an illustrative comparison. Suppose you have an enterprise agent with 40 tools, and the average request uses 3 of them. With GPT-5.2 (full tool loading), each request includes all 40 schema definitions: call it 6,000 tokens of tool overhead per request, or about $0.0105 at $1.75/M input. With GPT-5.4 + Tool Search, that overhead drops to roughly the lightweight tool index plus the 3 definitions actually needed: call it ~800 tokens, or about $0.002 at $2.50/M input.
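If you want to plug in your own numbers, here's a small sketch of that arithmetic. The token counts (6,000 and ~800) are the rough estimates from the scenario above, not measurements, and the function name is mine. It only covers tool-definition overhead, not the rest of the prompt or the output.

```python
# Input rates quoted in this article, in USD per 1M tokens.
GPT_5_2_INPUT_RATE = 1.75
GPT_5_4_INPUT_RATE = 2.50


def tool_overhead_cost(overhead_tokens: int, rate_per_million: float, requests: int = 1) -> float:
    """USD spent purely on tool definitions across a number of requests."""
    return overhead_tokens * rate_per_million * requests / 1_000_000


if __name__ == "__main__":
    requests = 1_000_000
    full_loading = tool_overhead_cost(6_000, GPT_5_2_INPUT_RATE, requests)
    tool_search = tool_overhead_cost(800, GPT_5_4_INPUT_RATE, requests)
    print(f"GPT-5.2, all 40 tools loaded: ${full_loading:,.0f}")
    print(f"GPT-5.4 + Tool Search:        ${tool_search:,.0f}")
```

Under these assumptions, a million requests carry about $10,500 of tool overhead on GPT-5.2 versus about $2,000 on GPT-5.4 with Tool Search. Your real ratio depends on how big your schemas are and how many tools a typical turn touches.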

The per-token price went up. The per-task cost went down. That's the counterintuitive reality of GPT-5.4 pricing for agentic workloads.

OpenAI has said GPT-5.4 uses fewer tokens to reach the same conclusions, making it the most token-efficient reasoning model yet in terms of both speed and cost.

GPT-5.4 Pro: Who Actually Needs It?

What Pro Adds Over Standard

GPT-5.4 Pro is available in the Responses API only, to enable support for multi-turn model interactions before responding to API requests, and other advanced API features in the future. Since GPT-5.4 Pro is designed to tackle tough problems, some requests may take several minutes to finish.

In ChatGPT, Pro activates the highest reasoning depth — the model will think longer, go further, and produce more thorough outputs on genuinely hard tasks. It also unlocks GPT-5.4's strongest BrowseComp performance (89.3% vs 82.7% for standard).

Key differences from standard:

- Maximum reasoning depth (xhigh effort, extended thinking time)

- Multi-turn interaction loop in the Responses API before final output

- No structured outputs support (standard GPT-5.4 does support structured outputs)

- Can take several minutes per complex request — design for async/background mode

Price Reality

GPT-5.4 Pro is priced at $30 per million input tokens and $180 per million output tokens. That's a 12× jump over standard GPT-5.4 ($2.50/$15) on both input and output, and roughly 15× the output price of Gemini.

Use Cases That Justify Pro Pricing

Be honest with yourself here. Pro pricing only makes sense when:

- The task takes hours, not seconds — long-horizon legal, financial, or research work where a single high-quality output saves multiple hours of human review

- You need the highest BrowseComp performance for deep web research chains

- You're building enterprise agents on multi-turn Responses API workflows where reasoning depth directly affects output quality

- The cost of a wrong answer exceeds the cost of the API call by a significant multiple

For most developers and teams, standard GPT-5.4 at $2.50/$15 is the right starting point. Try Pro on specific high-stakes tasks, not as your default model.

Free Access: What You Get Without Paying

ChatGPT Free Tier

GPT-5.4 Thinking is not available on the free tier at all. Free users get GPT-5.2 Instant, with a hard cap on the number of messages per five-hour window and an automatic downgrade to a mini model once they hit it.

What free tier gives you in March 2026:

- GPT-5.2 Instant (not GPT-5.4)

- ~10 messages per 5-hour window before throttling kicks in

- No model picker, so no manual model selection

- Basic tools (search, some image generation) with stricter rate limits than paid tiers

What's Locked Behind Paid Plans

For a limited time, Codex is included with ChatGPT Free and Go, with doubled rate limits on Plus, Pro, Business, Enterprise, and Edu plans.

Hidden Costs & Gotchas

GPT-5.2 Retirement Timeline = Forced Migration Cost

This one catches teams by surprise. GPT-5.2 Thinking will remain available for three months after GPT-5.4 Thinking launches, then retires on June 5, 2026. If your production workflows are currently pinned to GPT-5.2 Thinking, you have until June to migrate or your pipelines break.

The migration cost isn't just token price — it's prompt re-testing, evaluation runs, and output format validation. GPT-5.4 produces more streamlined outputs with cleaner formatting, which sounds good until your downstream parser breaks because the response structure changed. Budget time for this, not just money.

The 272K Context Cliff

Once your prompt history or document upload crosses the 272K token mark, the input token rate doubles to $5.00 per million and the output rate rises 1.5× to $22.50 per million. On a large codebase analysis or a lengthy legal document, it's easy to drift past this threshold without noticing.

Practical mitigation: track prompt length per request in your logging, set alerts at 200K tokens, and use prompt caching aggressively. Cached input drops to $0.25 per million tokens — a 90% reduction — which is your best defense against runaway context costs.
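Here's a rough sketch of what that monitoring could look like. The 200K alert level and the $2.50 / $0.25 rates come from this article; the function names and the "ok / warn / surcharge" labels are my own. The caching math assumes cached tokens are simply billed at the cached rate on a request that stays under 272K, which you should verify against your actual invoices.

```python
import logging

ALERT_THRESHOLD = 200_000       # early-warning level suggested above
SURCHARGE_THRESHOLD = 272_000   # long-context pricing applies above this
INPUT_RATE = 2.50               # USD per 1M uncached input tokens
CACHED_INPUT_RATE = 0.25        # USD per 1M cached input tokens

logger = logging.getLogger("prompt_budget")


def check_prompt_size(input_tokens: int) -> str:
    """Classify a request by how close it is to the long-context surcharge."""
    if input_tokens > SURCHARGE_THRESHOLD:
        logger.error("Prompt is %d tokens: long-context pricing applies", input_tokens)
        return "surcharge"
    if input_tokens >= ALERT_THRESHOLD:
        logger.warning("Prompt is %d tokens: approaching 272K", input_tokens)
        return "warn"
    return "ok"


def input_cost(input_tokens: int, cached_tokens: int = 0) -> float:
    """USD input cost for a request under the surcharge threshold."""
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    uncached = input_tokens - cached_tokens
    return (uncached * INPUT_RATE + cached_tokens * CACHED_INPUT_RATE) / 1_000_000
```

For a 200K-token prompt, that works out to about $0.50 with no cache hits and about $0.095 if 180K of those tokens are served from cache.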

Pro Version Latency at Scale

GPT-5.4 Pro is not a drop-in replacement for standard in real-time user-facing applications. Some Pro requests may take several minutes to finish — OpenAI recommends using background mode to avoid timeouts. For synchronous request/response architectures, this is a blocking problem. Async queue patterns are required.
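A minimal sketch of the async queue idea, using only Python's standard `asyncio`. The `slow_model_call` function is a hypothetical stand-in for a long-running Pro request (it just sleeps briefly); in a real system you'd swap in your actual client call, ideally with background mode, and add retries and persistence.

```python
import asyncio


async def slow_model_call(prompt: str) -> str:
    """Hypothetical placeholder for a Pro request that may take minutes."""
    await asyncio.sleep(0.01)  # simulated latency
    return f"result for: {prompt}"


async def worker(queue: asyncio.Queue, results: dict) -> None:
    """Pull jobs off the queue until cancelled."""
    while True:
        job_id, prompt = await queue.get()
        try:
            results[job_id] = await slow_model_call(prompt)
        finally:
            queue.task_done()


async def run_jobs(prompts: list[str], concurrency: int = 2) -> dict:
    """Process prompts with a fixed number of concurrent workers."""
    queue: asyncio.Queue = asyncio.Queue()
    results: dict = {}
    for job_id, prompt in enumerate(prompts):
        queue.put_nowait((job_id, prompt))
    workers = [asyncio.create_task(worker(queue, results)) for _ in range(concurrency)]
    await queue.join()  # wait until every job has been processed
    for task in workers:
        task.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return results


if __name__ == "__main__":
    print(asyncio.run(run_jobs(["contract review", "market analysis"])))
```

The point of the pattern is that the caller submits a job and picks up the result later, so a request that takes minutes never holds a user-facing connection open.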

Regional Processing Surcharge

Easy to miss: beginning with GPT-5.4, requests made to Data Residency and Regional Processing endpoints are charged an additional 10% on top of all other applicable pricing. If you're routing through EU or APAC data residency endpoints for compliance, that's a real cost line to add to your budget.
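For budgeting, the surcharge is a flat multiplier on whatever you'd otherwise pay. A tiny sketch, with a helper name of my own and the 10% figure taken from the paragraph above:

```python
REGIONAL_SURCHARGE = 0.10  # additional 10% on regional / data-residency endpoints


def with_regional_surcharge(base_cost_usd: float, regional: bool = True) -> float:
    """Apply the regional processing surcharge on top of all other pricing."""
    return base_cost_usd * (1 + REGIONAL_SURCHARGE) if regional else base_cost_usd


if __name__ == "__main__":
    print(f"$400/month routed regionally: ${with_regional_surcharge(400.0):.2f}")
```

So a $400/month workload becomes roughly $440 once it's routed through a regional endpoint.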

Verdict: When Is GPT-5.4 Worth Paying For?

Here's the decision framework I actually use:

If your monthly API spend is under ~$50: Stick with GPT-5.2 for now and evaluate GPT-5.4 on a test project. The token price increase matters more at low volume, and Tool Search benefits require agentic workflows to realize savings.

If you're between ~$50–$500/month: GPT-5.4 standard is likely worth it, especially if you run any agent workflows with multiple tools. Run your current workflow through GPT-5.4 with Tool Search enabled for two weeks and compare actual costs, not per-token rates.

If you're above ~$500/month on agentic tasks: GPT-5.4 standard with Tool Search is almost certainly cheaper than GPT-5.2 at scale. The tool overhead savings compound across volume.

GPT-5.4 Pro: Only if you have specific high-value tasks where reasoning depth directly translates to dollar-denominated output quality (legal analysis, financial modeling, research synthesis). Don't use it as your default. Try it on exactly the tasks where GPT-5.4 standard falls short.

The bottom line: GPT-5.4 is not "just more expensive." For the right workloads — agentic, multi-tool, professional knowledge work — the efficiency gains make it the more cost-effective choice even at a higher token rate. For simple, low-volume, chat-style use cases, the upgrade math doesn't work.

At Macaron, we've built our agent to handle exactly the kind of multi-step, tool-heavy workflows where GPT-5.4's efficiency gains matter most — translating conversations into structured, trackable tasks without losing context across steps. If you want to test how your workflow holds up without worrying about runaway token costs, try running a real project and see what it actually costs to get to done.
