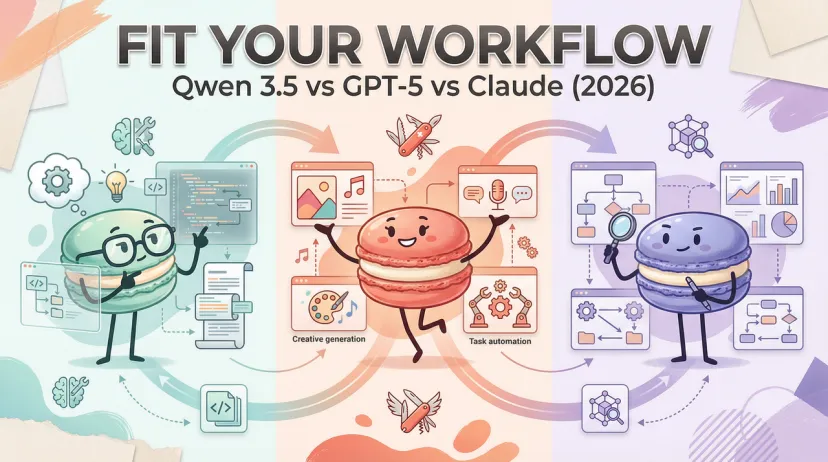

Qwen 3.5 vs GPT-5 vs Claude (2026): Which Model Fits Your Workflow?

Hey everyone — if you've spent more than ten minutes this week trying to figure out which model to actually use, you're not alone. I've been running the same mental debate, and it's made worse by the fact that one of the three models in this article technically hasn't dropped yet.

I'm Hanks. I test AI tools in real workflows. Here's my honest status check as of February 12, 2026: GPT-5.2 is live, Claude Sonnet 4.5 is live, and Qwen 3.5 is confirmed incoming but not yet released. Hugging Face pull requests from February 9 make it clear Qwen 3.5 is imminent, but anyone telling you they've run head-to-head benchmarks on it right now is either lying or working at Alibaba.

What I can do is give you a grounded picture of where each model actually sits, what the confirmed Qwen 3.5 architecture tells us about how it'll compete, and the decision logic for each scenario. That's the article.

What We're Comparing (The 4 Decision Dimensions)

I'm not building a benchmark table for its own sake. When someone asks "which model should I use," they're almost always asking one of four real questions:

- Reasoning quality: Can it handle my hard problems without melting down?

- Coding reliability: Will it produce working code on complex, multi-file tasks?

- Cost and access: What does this actually cost to run at my scale, and where can I deploy it?

- Workflow fit: Does it integrate with the tools I'm already using?

Everything below maps to one of those four questions. I'm not going to rank models on philosophy — I'm going to tell you which one to use for which task.

Reasoning & Complex Tasks

This is where the three models diverge most clearly, and where the "Qwen 3.5 is unreleased" caveat matters least — because the architecture tells us the story.

GPT-5.2 (current version, released December 2025) runs a real-time router that automatically selects between a fast main model and a deeper reasoning variant depending on task complexity. According to OpenAI's official release documentation, GPT-5 achieves 94.6% on AIME 2025 without tools and 88.4% on GPQA with extended reasoning — and GPT-5.2 with thinking mode is roughly 80% less token-intensive than o3 for equivalent reasoning quality. That's the headline: frontier reasoning at meaningfully lower compute cost than its predecessors.

In my actual testing with GPT-5.2, the thinking mode is genuinely useful for hard problems — but it requires you to say "think hard about this" or equivalent to reliably trigger it. Without that signal, the router sometimes defaults to the fast model on tasks that would benefit from chain-of-thought.

Claude Sonnet 4.5 (released September 29, 2025) scores 83.4% on GPQA Diamond and 100% on AIME with Python tool use. The extended thinking mode (available across the Claude 4.5 family) is explicit and opt-in, which means you have cleaner control over when you're paying for reasoning tokens versus fast responses. On subjective reasoning tasks — document analysis, nuanced argumentation, anything requiring judgment under ambiguity — I find Claude more consistently reliable than GPT-5 in the same tier. There's less variance between runs.
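To make the opt-in point concrete, here is a minimal sketch of how a request body changes when extended thinking is switched on. It only builds the payload dictionary and sends nothing. The `thinking` block with `budget_tokens` follows Anthropic's Messages API; the model alias `claude-sonnet-4-5`, the helper name `build_request`, and the token numbers are assumptions for illustration, so check the current API reference before relying on them.

```python
from typing import Any

MODEL = "claude-sonnet-4-5"  # assumed alias; confirm against the API docs


def build_request(prompt: str, think: bool = False,
                  budget_tokens: int = 4000,
                  max_tokens: int = 8000) -> dict[str, Any]:
    """Build a Messages API request body, with thinking strictly opt-in."""
    body: dict[str, Any] = {
        "model": MODEL,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    if think:
        # The reasoning budget counts toward max_tokens, so it must be smaller.
        if not 1024 <= budget_tokens < max_tokens:
            raise ValueError("budget_tokens must be >= 1024 and < max_tokens")
        body["thinking"] = {"type": "enabled", "budget_tokens": budget_tokens}
    return body


# Hypothetical prompts: one cheap request, one that pays for reasoning tokens.
fast = build_request("Summarize this changelog.")
deep = build_request("Find the race condition in this scheduler.", think=True)
```

The design point is that the fast path carries no `thinking` key at all, so you only pay for reasoning tokens on the requests where you asked for them.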

Qwen 3.5 inherits the hybrid thinking/non-thinking toggle from Qwen3, which already demonstrated competitive reasoning on math and structured logic tasks. The Qwen3-Next architecture it's built on — with hybrid attention and multi-token prediction — is specifically optimized for inference speed on long-context reasoning, where it reportedly delivers over 10x throughput versus Qwen3-32B. The architecture is genuinely interesting. But until I can test it myself, that's what it is: interesting.

For hard reasoning tasks right now: Claude Sonnet 4.5 for consistency, GPT-5.2 Pro for absolute ceiling. Watch Qwen 3.5 closely.

Coding & Agent Workflows

Coding is the area where the benchmarks are most interpretable and where real-world task performance tracks the published numbers most closely. Here's the current picture.

SWE-bench Verified, which measures real GitHub issue resolution, is the cleanest proxy for production coding quality.

Claude Sonnet 4.5's 77.2% on SWE-bench Verified is currently the highest among mid-tier models. The Anthropic release announcement describes a drop from 9% to 0% error rate on internal code editing benchmarks, and that matches what I've seen in practice on Python refactoring tasks. It's the model I'd choose for multi-file codebase work right now.

For multi-file editing and debugging, Claude Sonnet 4.5 with Claude Code (the terminal-native agent) is the most complete package. The checkpoint rollback feature added at release means you can let it run autonomously and recover cleanly if it breaks something, which it will, eventually. The 30+ hour sustained task capability is Anthropic's figure; in my own use, 4–6 hour agent sessions have run without context collapse.

GPT-5.2 is competitive and better integrated into the Microsoft ecosystem — if you're already in GitHub Copilot, the integration is smoother. But for standalone agentic coding, Claude's tooling is currently ahead.

Qwen3-Coder-Next (the current coding-optimized variant) is an open-weight model that matches GPT-5-High on fresh GitHub PRs in pass@5 testing. If you're self-hosting and cost is a real constraint, this is the number that matters. Qwen 3.5's coding capabilities aren't confirmed yet, but given the architecture improvements in throughput and efficiency, the 35B-A3B model in particular will be worth evaluating for local deployment coding workflows.

For coding workflows right now: Claude Sonnet 4.5 for quality + agentic reliability, GPT-5.2 for Microsoft ecosystem integration, Qwen3 for self-hosted/cost-constrained deployments.

Cost, Access & Ecosystem

Cost and deployment options are where the decision is clearest for each use case.

Claude Sonnet 4.5 API pricing: $3 input / $15 output per million tokens. Prompt caching bills cached input reads at $0.30/M tokens, a 90% discount on the base input price, so the effective input cost approaches that floor as your cache hit rate rises. Batch API gives 50% off. Available on Anthropic's API, AWS Bedrock, Google Vertex AI, and Microsoft Foundry. Data residency: Claude's infrastructure is Western-hosted with regional endpoint options.
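The pricing arithmetic can be sketched as a rough estimator. It uses the $3/$15 list prices and treats the $0.30/M figure above as the price of input tokens read from cache. It assumes `cache_hit_rate` means the fraction of input tokens served from cache and that the batch discount applies to the whole request, and it ignores cache-write surcharges. The function name and parameters are hypothetical.

```python
INPUT_PER_M = 3.00          # USD per million uncached input tokens
CACHED_INPUT_PER_M = 0.30   # USD per million input tokens read from cache (assumed)
OUTPUT_PER_M = 15.00        # USD per million output tokens
BATCH_DISCOUNT = 0.50       # Batch API: 50% off


def estimate_cost(input_tokens: int, output_tokens: int,
                  cache_hit_rate: float = 0.0, batch: bool = False) -> float:
    """Estimate USD cost for a workload (cache-write fees ignored)."""
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    cached = input_tokens * cache_hit_rate
    uncached = input_tokens - cached
    cost = (uncached * INPUT_PER_M
            + cached * CACHED_INPUT_PER_M
            + output_tokens * OUTPUT_PER_M) / 1_000_000
    return cost * (1 - BATCH_DISCOUNT) if batch else cost


# Hypothetical month: 10M input tokens and 1M output tokens.
baseline = estimate_cost(10_000_000, 1_000_000)
with_cache = estimate_cost(10_000_000, 1_000_000, cache_hit_rate=0.9)
print(f"no cache: ${baseline:.2f}, 90% cache hits: ${with_cache:.2f}")
```

Under these assumptions, output tokens dominate the bill once caching is in place, which is why trimming verbose responses often saves more than squeezing the prompt.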

GPT-5.2 pricing through the OpenAI API follows gpt-5-turbo tier pricing. Check OpenAI's pricing page directly for exact current rates, since they've been updated multiple times since August 2025. Consumer access is free in ChatGPT with rate limits; Plus ($20/month) adds priority access; Pro adds unlimited GPT-5 Pro. The Microsoft Copilot integration means GPT-5.2 is effectively embedded in tools where you're already paying for Microsoft 365, making the marginal cost for many enterprise users essentially zero.

Qwen3 (current, until 3.5 ships): free to use via open weights under Apache 2.0. API access via Alibaba Cloud is significantly cheaper than equivalent Western frontier API pricing. The 30B-A3B MoE model runs on a single 80GB A100 or two 40GB cards with vLLM. The constraint on the hosted API: data flows through Alibaba Cloud infrastructure with significant China-region dependencies. For teams with compliance requirements around data residency, this matters, and self-hosting the open weights is the way around it.
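A back-of-the-envelope check on that hardware claim, assuming 16-bit weights (2 bytes per parameter) and roughly 30 billion total parameters. In an MoE model, all experts have to sit in memory even though only about 3B parameters are active per token. The helpers below are hypothetical and ignore KV cache, activations, and framework overhead, which is what the leftover headroom is for.

```python
def weight_memory_gb(total_params_billions: float,
                     bytes_per_param: float = 2.0) -> float:
    """Approximate GB needed just to hold the weights (1 GB = 1e9 bytes)."""
    return total_params_billions * bytes_per_param


def headroom_gb(total_params_billions: float, gpu_gb: float,
                num_gpus: int = 1, bytes_per_param: float = 2.0) -> float:
    """Memory left for KV cache and overhead, summed across all GPUs."""
    total_gpu_memory = gpu_gb * num_gpus
    return total_gpu_memory - weight_memory_gb(total_params_billions,
                                               bytes_per_param)


weights = weight_memory_gb(30)                       # 16-bit weights
single_a100 = headroom_gb(30, gpu_gb=80)             # one 80GB card
dual_40gb = headroom_gb(30, gpu_gb=40, num_gpus=2)   # weights sharded over two cards
print(weights, single_a100, dual_40gb)
```

Either layout leaves about a quarter of total GPU memory for the KV cache under these assumptions, so long contexts or high concurrency will be the first thing to hit the limit.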

Which Model to Choose by Scenario

Best for Solo Creators & Independent Developers

Default recommendation: Claude Sonnet 4.5

If you're building agents, writing code, or doing knowledge work — and you want the best combination of quality, reliability, and cost at moderate volume — Claude Sonnet 4.5 is the answer right now. The $3/$15 pricing is predictable, the extended thinking mode gives you genuine control over cost vs. quality per request, and the Claude Code toolchain is mature enough to handle complex autonomous tasks without babysitting.

The one exception: if you're already deep in the ChatGPT interface and rely on its memory and conversation features, GPT-5.2 has meaningfully improved and the switching cost may not be worth it.

Watch Qwen 3.5 when it ships. The 9B model in particular — if it performs close to Qwen3-14B on coding and reasoning tasks while running on consumer hardware — will be the most compelling self-hosted option for indie developers who want to avoid API costs entirely.

Best for Teams & Agent Workflows

For pure agent performance: Claude Sonnet 4.5 (or Opus 4.5 for maximum ceiling)

The combination of 77.2% SWE-bench, 30+ hour sustained task execution, checkpoint rollback, the Claude Agent SDK, and availability across every major cloud platform makes this the most complete agentic stack available today. Devin reports an 18% improvement in planning performance with Sonnet 4.5. GitHub Copilot integration is live. The security agent case study (44% reduction in vulnerability intake time) gives you a real-world baseline for evaluating fit.

For Microsoft-native teams: GPT-5.2

If your team is running on Azure, Microsoft 365, and GitHub, the GPT-5.2 integration path is lower friction. You're not losing much on raw capability at this tier — the gap between GPT-5.2 and Claude Sonnet 4.5 on most production tasks is narrower than the benchmark gap implies.

For multilingual, self-hosted, or cost-sensitive deployments: Qwen3 now, Qwen 3.5 when available

Qwen3's 119-language coverage is not marketing. If you're building for non-English markets, this is a genuine differentiator. The MoE architecture means real deployment cost efficiency at scale. Apache 2.0 means no vendor lock-in.
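The scenario recommendations above can be condensed into a small lookup. This is a sketch only: the `Needs` fields and the precedence order are one reading of the logic in this section, not an official rubric, and the returned strings simply echo the recommendations as of this writing.

```python
from dataclasses import dataclass


@dataclass
class Needs:
    self_hosted: bool = False        # must run on your own hardware
    multilingual: bool = False       # non-English markets are a priority
    cost_sensitive: bool = False     # API spend is a hard constraint
    microsoft_native: bool = False   # Azure, Microsoft 365, GitHub stack
    max_ceiling: bool = False        # strongest agent regardless of cost


def recommend(needs: Needs) -> str:
    """Map workflow constraints to the recommendation from this section."""
    # Hard deployment constraints win over ecosystem preferences.
    if needs.self_hosted or needs.multilingual or needs.cost_sensitive:
        return "Qwen3 now, Qwen 3.5 when available"
    if needs.microsoft_native:
        return "GPT-5.2"
    if needs.max_ceiling:
        return "Claude Opus 4.5"
    return "Claude Sonnet 4.5"


print(recommend(Needs()))
print(recommend(Needs(microsoft_native=True)))
print(recommend(Needs(self_hosted=True, microsoft_native=True)))
```

Putting the deployment constraints first is a deliberate choice: a data-residency or budget requirement usually rules options out, while ecosystem fit only ranks the ones that remain.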

At Macaron, we think about this problem differently: the model question matters, but the system around the model matters more. Which model produced that good result? What temperature, what prompt structure, what context length? When you're running agent workflows across multiple models and trying to figure out which configuration to standardize on, that decision-making context disappears fast. We built Macaron to hold that context — so your workflow decisions have memory, and your experiments build on each other instead of starting from zero. If you're doing serious model evaluation, try running your comparison tasks inside Macaron at macaron.im. Low commitment, no lock-in — judge the results yourself.