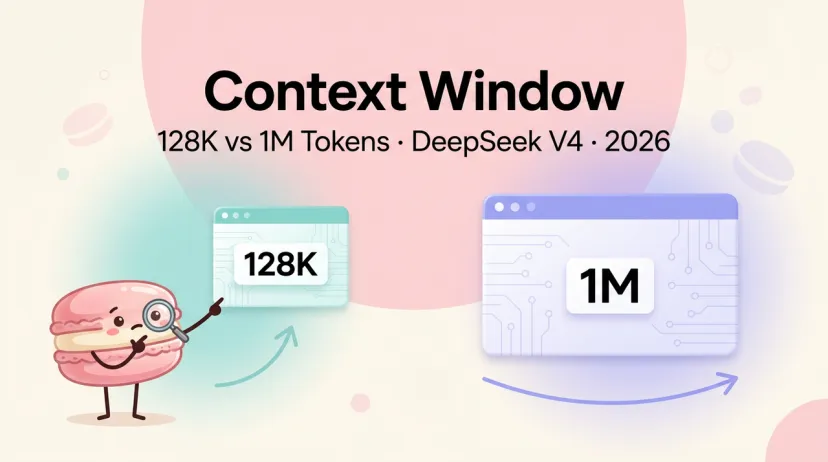

DeepSeek V4 Context Window: 128K vs 1M Tokens

Hey fellow context-window obsessives — I'm Hanks, and I've spent the last few months stress-testing long-context workflows across every major model that claims to "handle your entire codebase." If you're the type who's ever watched a model confidently hallucinate a function that was sitting right there at token 80K, you're in the right place.

The question I keep asking myself isn't "what's the token limit?" It's: can this model actually use those tokens when it matters?

DeepSeek's jump from V3's 128K to V4's reported 1M is the biggest context leap we've seen from a single model family in a while. I want to break down what's real, what's still speculation, and how it stacks up against competitors you're probably already using.

Context Window: V3 128K vs V4 1M

Let's start with what's confirmed vs. what's inferred.

DeepSeek V3 ships with a 128K token context window validated through the official technical report. The architecture uses Multi-head Latent Attention (MLA) and two-stage context extension with YaRN to reach that ceiling. Needle-in-haystack (NIAH) tests show it holds up well across the full 128K range — no dramatic degradation at the tail end.

DeepSeek V4 is a different story. On February 11, 2026, DeepSeek silently pushed an update to its app and web versions that expanded the context window to 1M tokens and moved the knowledge cutoff to May 2025. Whether this was a staged V4 preview or a V3 upgrade is still officially unconfirmed; the company said nothing. But developers noticed, and the change tracked with research papers on Engram (conditional memory) and DeepSeek Sparse Attention (DSA) that DeepSeek published in January 2026.

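The 1M figure isn't arbitrary. DSA reduces attention complexity from O(L²) to O(kL), where L is the context length and k is the number of tokens each query actually attends to. With k fixed, attention compute grows linearly with context length instead of quadratically. That's how 1M tokens becomes achievable without melting a data center.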
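To make the scaling argument concrete, here is a back-of-the-envelope sketch that counts the query-key pairs scored by dense attention (L²) versus a top-k sparse scheme (k·L). The value `K = 2048` is an assumption picked for illustration, not a confirmed V4 setting, and the count ignores whatever the token-selection step itself costs.

```python
def dense_pairs(context_len: int) -> int:
    """Query-key pairs scored by full attention: every token attends to every token."""
    return context_len * context_len


def sparse_pairs(context_len: int, k: int) -> int:
    """Pairs scored when each query attends to at most k selected tokens."""
    return context_len * min(k, context_len)


K = 2048  # assumed per-query token budget, for illustration only

for context_len in (128_000, 1_000_000):
    ratio = dense_pairs(context_len) / sparse_pairs(context_len, K)
    print(f"L={context_len:>9,}: dense scores {ratio:.1f}x more pairs than sparse")
```

The gap widens as L grows, which is the whole point: under this model, going from 128K to 1M multiplies sparse cost by about 8, while dense cost grows by about 61.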

Still, here's my honest take: the February update could be a real V4 preview, or it could be a V3.x incremental push. As of early March 2026, V4 hasn't officially launched. I'm treating the 1M context as likely real but not yet independently stress-tested at scale.

Competitor Comparison Table

Here's where the major models sit right now:

GPT-4o 128K

GPT-4o's 128K context is well-tested and reliable. The ceiling is real, and you're less likely to hit the "lost in the middle" surprises that sometimes affect models claiming bigger windows. Pricing at $2.50/M input is roughly 9x DeepSeek V3.2's $0.28/M cache-miss rate.

Claude 200K

Claude Sonnet's 200K context window is production-grade and has been widely validated. There's a 1M beta available on Sonnet 4 and 4.5 via API header (context-1m-2025-08-07), but it's still flagged as experimental. At $3/M input tokens, Claude is roughly 10x more expensive than DeepSeek at equivalent token counts.

Gemini 1M

Gemini markets up to 1-2M tokens depending on the version, but there's a gap between advertised and usable context. The "lost in the middle" effect is more pronounced at the extremes. Strong for rapid prototyping, but retrieval quality drops noticeably past ~70% capacity in independent tests.

What 1M Tokens Means in Practice

Okay, this is where I always stop and ask: does the task actually need this?

Codebase Ingestion

1M tokens is roughly 750,000 words, or about 3-4MB of source code depending on language verbosity (assuming around four characters per token). That covers many medium-sized Node.js or Python codebases, docs, tests, and configs included.

The real value isn't just "it fits." It's that you skip the chunking step. Most multi-file workflows today require you to break the repo into segments, feed them sequentially, then reconstruct the model's understanding across passes. With 1M context, that fragmentation problem disappears — in theory.

In practice? The model still needs to attend to the right parts. DSA helps with that by dynamically selecting high-value tokens rather than attending uniformly. But codebase ingestion at 1M hasn't been independently benchmarked yet.

A rough token-count cheat sheet:

- ~1K tokens → short function + docstring

- ~10K tokens → medium-size module (~500 lines)

- ~100K tokens → small project or feature branch

- ~500K tokens → production monorepo (medium complexity)

- ~1M tokens → large repo with docs, tests, CI configs
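If you want to know where your own repo lands on that cheat sheet, a crude estimate is easy to script. This is a minimal sketch assuming about four characters per token, which is a rule of thumb rather than any model's real tokenizer; the extension list, the skipped directories, and the 8K reserve for the reply are likewise placeholders to adjust.

```python
import os

CHARS_PER_TOKEN = 4.0  # assumed heuristic, not a real tokenizer
SOURCE_EXTS = {".py", ".js", ".ts", ".md", ".json", ".yaml", ".yml"}  # placeholder list
SKIP_DIRS = {".git", "node_modules", "__pycache__"}


def estimate_tokens(text: str, chars_per_token: float = CHARS_PER_TOKEN) -> int:
    """Rough token count derived from character length."""
    return int(len(text) / chars_per_token)


def estimate_repo_tokens(root: str) -> int:
    """Sum rough token estimates over the source-like files under root."""
    total = 0
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune vendored and VCS directories in place so os.walk skips them.
        dirnames[:] = [d for d in dirnames if d not in SKIP_DIRS]
        for name in filenames:
            if os.path.splitext(name)[1] in SOURCE_EXTS:
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="ignore") as handle:
                    total += estimate_tokens(handle.read())
    return total


def fits(tokens: int, window: int, reserve: int = 8_000) -> bool:
    """True if the input fits the window with room left for the model's reply."""
    return tokens + reserve <= window


if __name__ == "__main__":
    repo_tokens = estimate_repo_tokens(".")
    print(f"~{repo_tokens:,} tokens")
    print("fits 128K:", fits(repo_tokens, 128_000))
    print("fits 1M:  ", fits(repo_tokens, 1_000_000))
```

For a number you can rely on, swap the heuristic for the target model's actual tokenizer; code with lots of punctuation and short identifiers tends to tokenize less efficiently than prose.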

Multi-Document Analysis

Legal, research, and compliance workflows are the other obvious use case. Feeding 50+ documents at once for cross-reference, contradiction detection, or summary synthesis — that's where 1M tokens genuinely changes the workflow, not just the token count.

The honest caveat: long documents often have dense, redundant information near the middle. Models trained on this distribution can learn to skim rather than read. The Engram conditional memory architecture is supposed to address this, but I'd want to see third-party NIAH tests across the full 1M range before I trust it for anything critical.

Performance at Scale (Needle-in-Haystack)

V3's NIAH results are clean and verified in the official technical paper. Near-perfect retrieval accuracy across the full 128K window. That's genuinely impressive for an open-source MoE model.

For V4's 1M range — we don't have independent data yet.

Independent benchmarking of V3 at long context shows something worth noting: in practice, accuracy follows a U-shaped curve. Information at ~50% depth showed the worst retrieval accuracy (~40.5%) in controlled tests, while content at the beginning and end performed much better. This "lost in the middle" behavior is common across models. It's not a DeepSeek-specific flaw, but it's real.
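You can probe for that U-shape yourself with a small needle-in-a-haystack harness. The sketch below only builds the test inputs: it plants a known sentence at a chosen depth inside filler text. The function names, the filler, and the needle are all made up for illustration, and sending the prompt to a model and scoring the answer is left out.

```python
def build_haystack(filler: str, needle: str, depth: float, total_chars: int) -> str:
    """Return about total_chars of repeated filler with needle inserted at depth (0.0 to 1.0)."""
    if not filler:
        raise ValueError("filler must be non-empty")
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    body = (filler * (total_chars // len(filler) + 1))[:total_chars]
    cut = int(len(body) * depth)
    return body[:cut] + "\n" + needle + "\n" + body[cut:]


def needle_depth(haystack: str, needle: str) -> float:
    """Relative position of the needle, to sanity-check the generated input."""
    return haystack.index(needle) / len(haystack)


if __name__ == "__main__":
    needle = "The access code for the archive room is 7319."  # hypothetical fact
    for depth in (0.0, 0.25, 0.5, 0.75, 1.0):
        prompt = build_haystack("The sky was grey that morning. ", needle, depth, 20_000)
        print(f"depth={depth:.2f} -> needle at {needle_depth(prompt, needle):.2f}")
```

Run the same question ("What is the access code?") against each depth and plot accuracy; if the curve dips in the middle, you're seeing the effect described above.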

One mitigation that worked well in practice: force the model to generate a short analysis of the target content before the main task. That single prompt change pushed mid-depth accuracy from 40.5% to 51.8% in one study. Not a silver bullet, but worth knowing.
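As a rough illustration of that mitigation, here is one way to wrap a task so the model writes a short analysis first. The instruction wording is my own guess at the pattern, not the prompt used in the study, and `analysis_first_prompt` is a hypothetical helper.

```python
def analysis_first_prompt(document: str, task: str) -> str:
    """Ask for a brief analysis of the relevant content before the main task."""
    return (
        f"{document}\n\n"
        "Step 1: In two or three sentences, summarize the part of the document "
        "above that is most relevant to the task below.\n"
        "Step 2: Then complete the task.\n\n"
        f"Task: {task}"
    )


if __name__ == "__main__":
    print(analysis_first_prompt("<long document here>", "List every deprecated endpoint."))
```

The extra step costs a few dozen output tokens per call, which is usually a fair trade for better mid-depth recall.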

Until V4's 1M window gets the same rigorous treatment, treat the theoretical ceiling with appropriate skepticism.

Pricing at High Token Counts

This is where the cost story gets interesting — and a bit tricky.

DeepSeek's current API pricing for V3.2 is $0.028/M input (cache hit), $0.28/M input (cache miss), and $0.42/M output. For V4, pricing hasn't been announced.

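But let's do the math on what 1M-token workflows actually cost at current V3.2 rates. A single 1M-token input pass comes to about $0.28 on a cache miss and about $0.028 on a cache hit, before output tokens at $0.42/M.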

Compare that to Claude Sonnet 4.5 at $3/M input — the same 1M-token pass would cost ~$3.00+. DeepSeek stays roughly 10x cheaper at high token counts.
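Here's that arithmetic as a small script, using only the per-million prices quoted in this article. Treat it as a sketch: the dictionary keys are labels I made up, the 1M-token DeepSeek pass is hypothetical since the V3.2 API currently caps at 128K, and V4 pricing is unknown.

```python
# USD per million input tokens, as quoted in this article.
INPUT_PRICE_PER_M = {
    "deepseek_v3.2_cache_miss": 0.28,
    "deepseek_v3.2_cache_hit": 0.028,
    "claude_sonnet_4.5": 3.00,
}


def input_cost(tokens: int, price_per_m: float) -> float:
    """Cost in USD of sending `tokens` input tokens at a per-million price."""
    return tokens / 1_000_000 * price_per_m


if __name__ == "__main__":
    tokens = 1_000_000
    for label, price in INPUT_PRICE_PER_M.items():
        print(f"{label:<26} ${input_cost(tokens, price):.3f}")
    ratio = INPUT_PRICE_PER_M["claude_sonnet_4.5"] / INPUT_PRICE_PER_M["deepseek_v3.2_cache_miss"]
    print(f"Claude vs DeepSeek (cache miss): {ratio:.1f}x")
```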

The catch: if V4 introduces pricing changes for the extended 1M window, the math shifts. Watch the DeepSeek API docs for any model-specific surcharge at high token counts.

Cache hit strategy matters here. If your prompt includes a long, repeated system context (e.g., a full codebase that doesn't change between runs), the $0.028 cache-hit rate makes repeated queries extremely cheap. Building your workflow around this can cut input costs by up to 90% compared to naive prompting.
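To see how close you get to that ceiling, here's a sketch comparing repeated runs with and without a cached prefix at the V3.2 input rates quoted above. It assumes the best case: every run after the first gets a cache hit on the entire prefix. Real caches expire and match at some granularity, so actual savings will be lower. Output tokens are ignored.

```python
PER_M = 1_000_000


def naive_cost(prefix_tokens: int, question_tokens: int, runs: int,
               miss: float = 0.28) -> float:
    """Every run pays the cache-miss rate on the full input."""
    return runs * (prefix_tokens + question_tokens) * miss / PER_M


def cached_cost(prefix_tokens: int, question_tokens: int, runs: int,
                miss: float = 0.28, hit: float = 0.028) -> float:
    """First run pays miss on everything; later runs pay hit on the prefix only."""
    first = (prefix_tokens + question_tokens) * miss / PER_M
    later = (prefix_tokens * hit + question_tokens * miss) / PER_M
    return first + (runs - 1) * later


if __name__ == "__main__":
    prefix, question, runs = 100_000, 500, 20  # illustrative workload
    naive = naive_cost(prefix, question, runs)
    cached = cached_cost(prefix, question, runs)
    print(f"naive:  ${naive:.4f}")
    print(f"cached: ${cached:.4f}")
    print(f"saved:  {100 * (1 - cached / naive):.0f}%")
```

The savings approach 90% only as the number of runs grows and the changing part of the prompt stays small relative to the prefix.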

Best Practices

A few things that actually survive contact with real workflows:

- Don't default to maximum context. More tokens = more latency and more cost. If your task only needs 50K tokens, don't pad it to 1M hoping for better results. V3's NIAH data confirms performance is excellent well within 128K — there's no benefit to artificially stretching context.

- Put critical instructions at the beginning or end. The U-shaped accuracy curve is real. Don't bury your most important instruction at the 50% mark in a long prompt. Structure your prompt so key directives appear at the top, and reference them again at the bottom if needed.

- Use cache-hit pricing intentionally. If you're running repeated analysis on the same document set, keep the document chunk in a fixed prefix position. This maximizes cache hit rates and keeps costs low.

- Wait for V4 NIAH benchmarks before committing. For production workflows at the 1M scale, I'd want third-party validation before trusting the model with multi-million-dollar contracts or mission-critical code. Independent benchmarks typically arrive within 1-2 weeks of launch.

- Consider the "cheap model + verifier" cost trap. Some teams use DeepSeek for bulk processing then verify with a higher-cost model. One analysis found this hybrid setup can cost 15% more than just using a premium model for the full task on medium-complexity work. Run the numbers for your specific workload before assuming the cheap-model path is always cheaper.
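Two of the practices above, keeping documents in a fixed prefix and stating key directives at both the top and the bottom, can be combined in one prompt layout. The sketch below is one possible arrangement, not an official recommendation; `build_messages` is a hypothetical helper, and the role/content dictionaries follow the common chat-completions convention, which I'm assuming your client accepts.

```python
def build_messages(instructions: str, documents: list[str], question: str) -> list[dict]:
    """Stable system prefix (instructions + documents), then the varying question."""
    corpus = "\n\n".join(documents)
    # Identical across runs as long as instructions and documents don't change,
    # so the provider's prefix cache has something to match.
    system = f"{instructions}\n\n<documents>\n{corpus}\n</documents>"
    # Only this part varies; the instructions are restated at the very end.
    user = f"{question}\n\nReminder of the instructions: {instructions}"
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


if __name__ == "__main__":
    docs = ["Contract A text...", "Contract B text..."]
    rules = "Cite the document name for every claim."
    first = build_messages(rules, docs, "Which contracts mention arbitration?")
    second = build_messages(rules, docs, "Do any termination clauses conflict?")
    print(first[0]["content"] == second[0]["content"])  # prefix unchanged across runs
```

Keep the document order deterministic too; shuffling the same files between runs changes the prefix and defeats the cache.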

At Macaron, the workflows we've seen struggle most aren't the ones with too little context — they're the ones where a 500K-token codebase gets loaded, the model gives a confident answer, and nobody verifies whether it actually attended to the right files. If you're building on top of long-context models and want to test how a specific task holds up in a structured workflow without burning through API budget, try running a real task through Macaron — the memory layer persists between passes so you're not re-feeding the same context from scratch every time.

FAQ

Q: Is DeepSeek V4 available right now (March 2026)?

As of early March 2026, V4 has not officially launched. The February 11 context update is widely interpreted as a V4 preview, but DeepSeek has not confirmed this. Current API access uses V3.2 with 128K context.

Q: What's the actual token limit of the current DeepSeek API?

The deepseek-chat and deepseek-reasoner API endpoints both use V3.2 with a 128K context limit as of March 2026, which differs from the app/web version that received the 1M update.

Q: Does 1M context mean the model actually uses all those tokens reliably?

Not necessarily. Advertised context windows often exceed reliable usable context. The "lost in the middle" phenomenon is well-documented: models tend to over-rely on content at the beginning and end of long inputs. DSA architecture is designed to mitigate this, but independent benchmarks at 1M scale haven't been published yet.

Q: How does DeepSeek V4's 1M compare to Gemini's 1M?

Both claim 1M tokens, but architecture differs significantly. DeepSeek uses DSA for dynamic sparse attention; Gemini uses a different approach. Gemini's 1M has been available longer and has more independent testing, but retrieval reliability still degrades past ~70% capacity in some evaluations. V4 is potentially better-architected for long-context retrieval due to Engram's conditional memory, but this remains unverified.

Q: Should I wait for V4 before building a long-context pipeline?

Depends on your timeline. If you're building now, V3.2 with 128K is solid and well-tested. If your use case truly needs 1M tokens (e.g., full monorepo ingestion), it's worth waiting for V4's official launch and the first wave of independent benchmarks before committing to infrastructure.

Q: What's the most cost-efficient way to use DeepSeek for long-context tasks?

Maximize cache hits by keeping repeated content in a fixed prefix position. Use V3.2's $0.028/M cache-hit rate for any workflow that re-reads the same documents repeatedly. Avoid over-padding: only include context that's genuinely needed for the task.
