Too Much to Read? How AI Turns Content Into Learning

Hi there, I’m Anna!

Let's be real: this started because I couldn't find a document I'd definitely saved. Somewhere across Pocket, Notion, and a Notes app graveyard was the research I needed. I knew it was there. I'd saved it, felt good about it, and never opened it again. That night I checked Pocket for the first time in weeks. 340 unread items. Closed it immediately.

I'm someone who builds systems for a living. If I couldn't solve this, it wasn't a discipline problem. It was structural. That's what pushed me to actually stress-test how AI handles knowledge digestion: not summarizing, but the conditions where learning actually sticks. Here's what held up. And what didn't.

The Real Problem: It's Not That You're Lazy

Let me be honest with you. The reading backlog problem isn't a motivation problem. It's a structural one. And most people solving it are solving the wrong thing.

Why the reading backlog keeps growing

Here's the thing nobody says out loud: we save content as a way to feel productive without doing the hard work of processing it. The save button is a dopamine hit. The actual reading is friction.

But the deeper issue is volume. The amount of content worth reading — genuinely worth reading — has outpaced any human's ability to keep up. Research papers, industry newsletters, long-form threads, technical documentation. It doesn't slow down.

I was saving roughly 20–30 pieces a week. Reading maybe 3. Processing, in any meaningful way that changed how I worked? Maybe 1. That's a brutal ratio.

The difference between saving content and learning from it

Saving is not learning. Skimming is not learning. Even reading is not always learning.

Learning requires something more uncomfortable: engaging with the material, questioning it, connecting it to what you already know, and doing something with it. That's the part that breaks down.

This is where I started experimenting. Not with "can AI summarize this?" — summaries are fine but they don't stick. I wanted to know: can AI reconstruct the conditions for actual learning?

What AI Can Do Beyond Summarizing

Most people stop at summaries. That's the lowest-value use of AI for knowledge work. Let me show you what I found when I pushed further.

Breaking hard content into digestible, interactive segments

The moment something clicked for me: I stopped asking AI to summarize and started asking it to teach me.

This approach mirrors the worked-example effect from John Sweller's Cognitive Load Theory: learners new to a topic tend to understand complex material better from guided, step-by-step explanations than from working it out unaided.

Take a dense 40-page research report. Instead of "summarize this," I'd ask: "Explain section 3 like I understand the basics but have never seen this methodology before. Then give me two examples that would make this concrete."

The output quality difference is significant. The AI has to slow down, construct an explanation, find analogies. That process produces something closer to what a tutor would give you — not what a highlighter would give you.

This works because information overload isn't really about volume. It's about the absence of a processing layer between raw content and your brain. AI can be that layer — if you ask it to be.

Creating questions, prompts, and memory anchors from your materials

Here's a technique I've been running for about six weeks: after uploading a document, I ask the AI to generate five questions I should be able to answer if I actually understood this. Then I try to answer them without looking at the output.

This is basic retrieval practice, one of the best-documented learning techniques in cognitive science research. And AI makes it trivially easy to generate custom question sets from any document.

The AI becomes a testing mechanism, not just a reading aid. That's a meaningfully different use case.
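
If you'd rather not retype the request every time, the five-question technique above can be wrapped in a small helper. This is only a minimal sketch: the function name `build_quiz_prompt` and the exact wording are illustrative assumptions, not part of any tool's API, and you would paste the resulting text into whichever AI chat you already use.

```python
def build_quiz_prompt(document_text: str, num_questions: int = 5) -> str:
    """Build a prompt that asks for retrieval-practice questions only.

    Hypothetical helper: the wording is an assumption, adjust it freely.
    """
    if num_questions < 1:
        raise ValueError("num_questions must be at least 1")
    return (
        f"Read the document below and write {num_questions} questions "
        "I should be able to answer if I actually understood it.\n"
        "Do not include the answers yet; I will attempt them first.\n\n"
        "--- DOCUMENT ---\n"
        f"{document_text.strip()}\n"
        "--- END DOCUMENT ---"
    )


# Example with a one-line stand-in for a real document.
prompt = build_quiz_prompt("Spaced repetition improves long-term retention.")
print(prompt)
```

Asking the model to hold back the answers is the design choice that matters here: it keeps the retrieval step on your side of the conversation.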

How to Actually Use AI for Knowledge Digestion

I've run enough iterations to have a rough framework that doesn't fall apart in practice.

Step 1 — Choose what's worth processing deeply

Not everything deserves deep processing. Most content deserves to be discarded. The first filter isn't AI — it's you deciding what actually warrants your attention.

I now run a two-minute pre-check before anything goes into my AI workflow: Does this change how I think about something I'm actively working on? If the answer is "maybe later," it goes in the archive. Not the queue.

AI amplifies your processing — it doesn't replace your judgment about what's worth processing.
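
For anyone who keeps their saved items in a list or spreadsheet, the pre-check can be written down as a tiny rule. Treat this as a sketch under assumptions: the `SavedItem` class, the `triage` function, and the three answer labels are hypothetical, and the "discard" branch is my reading of "most content deserves to be discarded" rather than a rule stated outright.

```python
from dataclasses import dataclass


@dataclass
class SavedItem:
    title: str
    # Your answer to: "Does this change how I think about something
    # I'm actively working on?" Expected: "yes", "maybe later", or "no".
    answer: str


def triage(item: SavedItem) -> str:
    """Return where the item goes: 'queue', 'archive', or 'discard'."""
    answer = item.answer.strip().lower()
    if answer == "yes":
        return "queue"      # worth deep processing with AI
    if answer == "maybe later":
        return "archive"    # kept, but out of the active queue
    return "discard"        # assumption: anything else is dropped


items = [
    SavedItem("Paper on retrieval practice", "yes"),
    SavedItem("Long thread on note apps", "maybe later"),
    SavedItem("Newsletter issue", "no"),
]
for item in items:
    print(f"{item.title}: {triage(item)}")
```

The judgment still happens in your head when you fill in `answer`; the code only makes the routing consistent.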

Step 2 — Give it context, not just the raw document

This is where most people leave performance on the table. They paste a document and say "summarize." Instead, tell the AI who you are and why this matters to you.

Example prompt I actually use: "I'm building an AI-assisted workflow for content research. This paper is about [topic]. I want to understand the methodology well enough to evaluate whether it applies to my use case. Focus on sections 2 and 4."

The output changes meaningfully. Context transforms a generic summary into a situated analysis. That's the difference between reading and learning.
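
The example prompt in this step has a reusable shape: who you are, what the document is about, what you want from it, and where to focus. Here is a minimal sketch of that shape as a template. The names `format_sections` and `build_context_prompt` are hypothetical, and the output is just text to paste into a chat, not a call to any specific model API.

```python
def format_sections(sections: list[int]) -> str:
    """Turn [2, 4] into 'sections 2 and 4'; return '' for an empty list."""
    nums = [str(s) for s in sections]
    if not nums:
        return ""
    if len(nums) == 1:
        return f"section {nums[0]}"
    return "sections " + ", ".join(nums[:-1]) + " and " + nums[-1]


def build_context_prompt(
    project: str,
    topic: str,
    goal: str,
    focus_sections: list[int],
    document_text: str,
) -> str:
    """Assemble a context-first prompt (hypothetical helper)."""
    lines = [
        f"I'm {project}.",
        f"This paper is about {topic}.",
        f"I want to {goal}.",
    ]
    focus = format_sections(focus_sections)
    if focus:
        lines.append(f"Focus on {focus}.")
    lines.append("")  # blank line between the context and the document
    lines.append(document_text.strip())
    return "\n".join(lines)


prompt = build_context_prompt(
    project="building an AI-assisted workflow for content research",
    topic="[topic]",
    goal=(
        "understand the methodology well enough to evaluate "
        "whether it applies to my use case"
    ),
    focus_sections=[2, 4],
    document_text="(paste the paper text here)",
)
print(prompt)
```

Keeping the context lines ahead of the document means the model sees your situation first, whatever it is you paste in afterwards.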

Step 3 — Engage with it rather than just reading the output

This is the one people skip. They get the AI output, read it, close the tab, and move on. Nothing sticks.

Engagement looks like: asking follow-up questions, pushing back on claims, asking for alternative interpretations, requesting examples from different domains. Treat the AI like a knowledgeable person you're having a conversation with — not a document to be consumed.

I've started keeping a running note of "things I questioned the AI on" per document. Reviewing that is more useful than re-reading the summary.

According to Anthropic's model documentation, conversational back-and-forth significantly improves the contextual quality of responses — which aligns with what I've observed in practice.

What This Doesn't Fix

I want to be clear here, because the hype around AI learning tools doesn't always acknowledge limits.

AI can't make you care about a topic

I ran an experiment: I tried to use AI to help me process content on a topic I fundamentally didn't care about. The sessions were technically fine. Nothing stuck. I retained almost nothing a week later.

Motivation precedes method. AI can lower the friction of processing — it can't manufacture genuine interest. If the content isn't relevant to a real problem you're working on, no tool will save you.

Depth still requires your active time

Here's what I hoped wasn't true but turned out to be: there's no shortcut to depth. You can compress time. You can improve signal-to-noise. You can get a better starting point. But genuine understanding of complex material still requires extended engagement.

The AI-assisted version is faster than reading alone. It's not instantaneous. Expecting it to be instant leads to disappointment and, worse, to the illusion of having learned something you haven't.

Tools Worth Trying (With Honest Limits)

OpenMAIC — interactive learning sessions from your documents

OpenMAIC is the tool I've gotten the most consistent value from in this specific use case. You upload a document and it generates interactive sessions — questions, explanations, structured review — rather than a static summary.

What works: The interactive format forces engagement. The question generation is genuinely useful for retention. The pacing is better than trying to manage this manually in a chat interface.

What doesn't: It's not great at handling highly technical domain-specific content. Mathematical papers, for instance, get processed at a surface level. And if your document is poorly structured, the output reflects that.

Honest verdict: worth trying if you have a genuine reading backlog problem and you're willing to actually engage with the sessions rather than just generate them.

NotebookLM — research-style Q&A, not structured learning

NotebookLM from Google is excellent at one thing: letting you interrogate a document like a research assistant. Ask it where a specific claim appears, ask it to compare two sections, ask it to find contradictions.

Where it's strong: Multi-document research. If you're synthesizing across five sources, NotebookLM is the better tool.

Where it falls short: It's not designed for learning. There's no structured progression, no retrieval practice, no pacing. You get answers to questions you ask — which means if you don't know what to ask, you won't get much out of it.

When neither is the right fit

If you're dealing with highly visual content, content requiring domain expertise to evaluate, or material where the doing matters more than the reading — neither tool will help much.

The right fit is content that's primarily text-based, conceptually dense, and relevant to a real problem you're actively working on. Outside that window, you're better off with a different approach entirely.

A broader look at personal knowledge management with AI confirms this pattern: the tools that stick are the ones matched to specific workflows, not adopted wholesale as a replacement for thinking.

FAQ

Q1: Is AI actually useful for knowledge management, or is it just another distraction?

It's genuinely useful — but only if you change how you interact with it. Passive use (paste, read output, close tab) produces almost no lasting value. Active use (engage, question, retrieve) can meaningfully improve how much you retain from complex material.

Q2: What's the difference between AI knowledge management and just using ChatGPT to summarize things?

Summarization is the lowest-value use. AI knowledge management means using the model to generate questions, create structured review sessions, provide situated explanations, and support active retrieval. That's a fundamentally different workflow.

Q3: How long does an AI-assisted learning session actually take?

In my experience: 20–40 minutes per substantial document if you're engaging properly. That's faster than reading carefully, but it's not instant. Budget real time.

Q4: Can I use this for technical content — papers, documentation, code?

Yes, but with caveats. AI handles conceptual technical content well. It handles mathematical proofs, proprietary systems, and highly domain-specific jargon less well. Always verify technical claims independently.

Q5: What's the single biggest mistake people make with AI learning tools?

Reading the output instead of engaging with it. The output is a starting point for a conversation, not a destination. If you're not asking follow-up questions, you're leaving most of the value on the table.

Previous posts:

- What Is OpenMAIC? A New Way to Turn Documents Into Interactive Learning

- Too Many AI Tools, No Results? Here’s What’s Actually Going Wrong

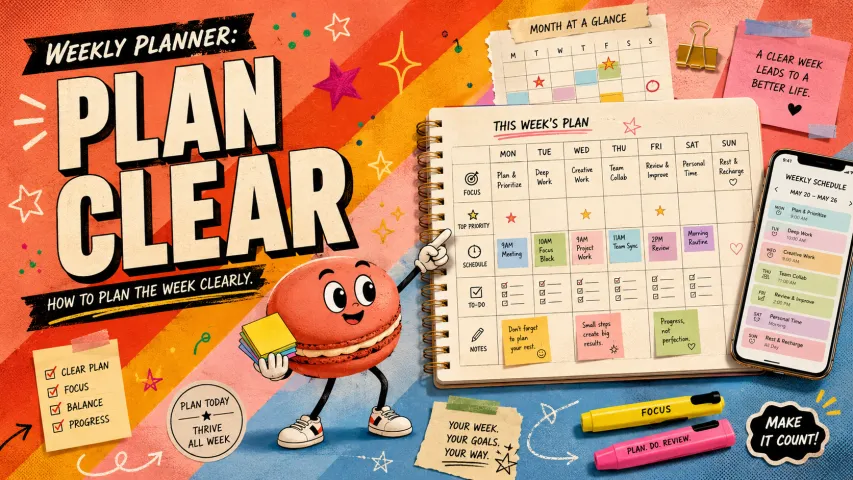

- Macaron App: Using AI Tools in Daily Life Without the Overwhelm

- GLM-5 vs DeepSeek vs GPT-5: Choosing the Right Personal AI

- Claude Opus 4.6 Use Cases: Where It Actually Delivers Value