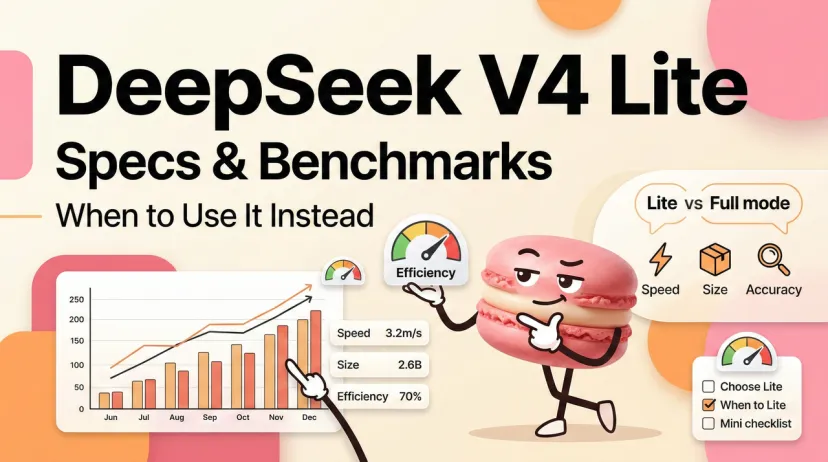

DeepSeek V4 Lite: Specs, Benchmarks & When to Use It Instead

Hey fellow cost-per-token optimizers — if your first question when a new model drops is "okay, but what does the Lite version look like," you're in the right place.

I've been tracking the V4 rollout since the benchmark leaks started circulating in February. V4 Lite surfaced through inference providers under NDA, got spotted in production test traffic as sealion-lite, and the community has been piecing together the picture ever since. Here's what the data actually says — and more importantly, the decision framework for when Lite wins.

What Is DeepSeek V4 Lite?

The pre-release version of DeepSeek V4 Lite, codenamed "Sealion Lite," supports native multimodal processing and a 1M-token context window. That's the same headline context number as full V4 — not a downgrade.

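In terms of parameter scale, V4 Lite is expected to have approximately 200 billion parameters, while the full version of V4 may exceed 1 trillion. This is the core trade-off: roughly 80% fewer total parameters. Because both are expected to be MoE models, that cut mainly shrinks the memory needed to host the weights. Per-token compute tracks active parameters, so expect lower inference cost and higher throughput, but by less than the 5x headline ratio.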

A "DeepSeek-V4-Lite" or "Coder-33B" variant is expected shortly after the full V4 launch, designed to fit on a single consumer GPU with 24GB VRAM. A model that small would have to be a separate checkpoint: the ~200B Sealion Lite won't fit in 24GB without offloading. The self-hosting angle is still real. If you're running local inference, a small V4-family variant is likely the version you can actually deploy without a multi-GPU setup.

The V4 Lite variant that leaked through inference providers under NDA reportedly showed breakthrough SVG code generation. These claims remain unverified by independent benchmarking: the SVG demos exist, but broader benchmark coverage will stay thin until the official release.

V4 Lite vs V4 Full — Specs Table

The table below combines confirmed architecture details with pre-release estimates. Full V4 specs are from reporting by the Financial Times, Reuters, and TechNode. Lite specs are based on the Sealion Lite leak and community analysis. Treat Lite figures as estimates until official release.

DeepSeek V4 is a trillion-parameter MoE model with ~32B active parameters per token — a 50% increase in total model size over V3.2 while reducing active parameters from 37B to ~32B. Lite mirrors this efficiency-first design at a smaller scale.

Benchmark Comparison

Coding Tasks

Unverified reports claim V4 scores 90% on HumanEval versus Claude 88% and GPT-4 82%, and exceeds 80% on SWE-bench Verified. These remain internal claims pending independent verification.

In technical demonstrations, V4 Lite rendered a detailed Xbox controller using 54 lines of code and a multi-element scene in 42 lines — outputs described as outperforming DeepSeek V3.2, Claude Opus 4.6, and Gemini 3.1 in code optimization and logical organization. Again: unverified, from NDA-bound testers.

What's confirmed and independently verifiable is the V3.2 baseline. DeepSeek's V3.2-Exp achieved a Codeforces rating of 2121 (up from 2046 in V3.1-Terminus), while BrowseComp improved from 38.5 to 40.1. MMLU-Pro held at 85.0 and AIME 2025 improved to 89.3. V4 and V4 Lite build on this foundation.

Projected benchmark table (treat as estimates):

General Reasoning

V4's reasoning gains come primarily from three architectural innovations: Manifold-Constrained Hyper-Connections (mHC), Engram conditional memory, and DeepSeek Sparse Attention with a Lightning Indexer. Lite is expected to retain Engram and DSA but likely skips the full mHC implementation — which is where the reasoning gap between full and Lite will show most clearly on complex multi-step tasks.

For classification, summarization, extraction, and single-step coding: the gap is likely small. For complex agentic reasoning chains or multi-file refactors requiring sustained coherence: use full V4.

Latency & Throughput

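No official benchmarks yet. The ~5:1 total-parameter ratio (full to Lite) mainly affects memory footprint; per-token compute scales with active parameters (~32B for full V4 versus an estimated ~20–25B for Lite), so the speed gap should be well under 5x. Based on that and on patterns from prior DeepSeek model generations, reasonable estimates: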

The throughput gap matters most for two scenarios: real-time user-facing interfaces where latency is visible, and high-concurrency pipelines where you're hitting the API with many parallel requests. Lite wins both.
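A rough way to compare the two models: forward-pass compute per token is commonly approximated as 2 × active parameters, while weight memory scales with total parameters. The sketch below applies that rule of thumb to this article's figures (~1T total / ~32B active for full V4; ~200B total and ~20–25B active for Lite, using 22.5B as a midpoint). All inputs are unconfirmed estimates, and real latency also depends on memory bandwidth, batching, and attention cost.

```python
from dataclasses import dataclass


@dataclass
class MoESpec:
    name: str
    total_params_b: float   # total parameters, in billions
    active_params_b: float  # parameters active per token, in billions

    def flops_per_token(self) -> float:
        # Rule of thumb: ~2 FLOPs per active parameter per generated token
        return 2 * self.active_params_b * 1e9


# Estimates from this article, not official specs
full = MoESpec("V4 Full", total_params_b=1000, active_params_b=32)
lite = MoESpec("V4 Lite", total_params_b=200, active_params_b=22.5)

memory_ratio = full.total_params_b / lite.total_params_b
compute_ratio = full.flops_per_token() / lite.flops_per_token()
print(f"weight-memory ratio: {memory_ratio:.1f}x")      # weight-memory ratio: 5.0x
print(f"per-token compute ratio: {compute_ratio:.2f}x")  # per-token compute ratio: 1.42x
```

The takeaway: Lite's biggest win is in how much hardware it takes to serve, while per-token speed gains are more modest under these assumptions.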

Price Per Million Tokens

Current official pricing from DeepSeek's API docs for the V3.2 generation (the active API baseline as of March 2026):

The deepseek-chat model (DeepSeek-V3.2, non-thinking mode) is priced at $0.028 per 1M input tokens (cache hit), $0.28 per 1M input tokens (cache miss), and $0.42 per 1M output tokens.
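To see how the cache-hit rate moves your effective bill, here's a minimal sketch using the three V3.2 deepseek-chat rates quoted above. The function name and the example traffic are hypothetical; only the per-million prices come from DeepSeek's pricing.

```python
# Published deepseek-chat (V3.2, non-thinking) rates, USD per 1M tokens
PRICE_INPUT_CACHE_HIT = 0.028
PRICE_INPUT_CACHE_MISS = 0.28
PRICE_OUTPUT = 0.42


def workload_cost_usd(input_tokens: int, output_tokens: int,
                      cache_hit_rate: float) -> float:
    """Estimate cost given token counts and the fraction (0.0 to 1.0) of
    input tokens served from DeepSeek's context cache."""
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    hit_tokens = input_tokens * cache_hit_rate
    miss_tokens = input_tokens - hit_tokens
    cost = (
        hit_tokens * PRICE_INPUT_CACHE_HIT
        + miss_tokens * PRICE_INPUT_CACHE_MISS
        + output_tokens * PRICE_OUTPUT
    )
    return cost / 1_000_000


# Example: 1M input tokens at a 70% cache-hit rate, plus 1M output tokens
print(round(workload_cost_usd(1_000_000, 1_000_000, 0.70), 4))  # 0.5236
```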

V4 and V4 Lite pricing is not yet confirmed. Projected based on DeepSeek's historical pricing trajectory — each generation has been meaningfully cheaper than the last:

The key number: if V4 Lite prices near current V3.1 levels ($0.14–0.15/M input), you're looking at a ~2–3x cost reduction versus full V4 while retaining the same 1M context window. For high-volume pipelines, that difference compounds fast.

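Quick math on input cost alone: at 50M input tokens/day (about 1.5B tokens over a 30-day month), applying each projected rate to every input token:

- V4 Full at $0.45/M: ~$675/month

- V4 Lite at $0.13/M: ~$195/month

These flat-rate figures ignore caching. With a 30% cache-miss rate, and if V4 keeps V3.2-style discounts on cache hits, both numbers would drop further.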
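To rerun this quick math with your own volumes, here's a minimal sketch of the flat-rate calculation. The $0.45 and $0.13 rates are projections, not published prices, and the 30-day month is an assumption.

```python
DAYS_PER_MONTH = 30  # assumption: flat 30-day month

# Projected input rates in USD per 1M tokens (estimates, not official pricing)
PROJECTED_INPUT_RATES = {
    "v4-full": 0.45,
    "v4-lite": 0.13,
}


def monthly_input_cost(tokens_per_day: float, rate_per_million: float,
                       days: int = DAYS_PER_MONTH) -> float:
    """Monthly input-token cost, applying one flat rate to every token."""
    return tokens_per_day * days / 1_000_000 * rate_per_million


daily_tokens = 50_000_000
for name, rate in PROJECTED_INPUT_RATES.items():
    print(f"{name}: ${monthly_input_cost(daily_tokens, rate):,.0f}/month")
# v4-full: $675/month
# v4-lite: $195/month
```

Swap in a blended rate from your own cache-hit data to get a tighter estimate.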

Best Use Cases for Lite

High-Volume Pipelines

Any workload where you're processing large numbers of short-to-medium requests and the output quality gap between Lite and Full is within acceptable tolerance. The threshold I use: if you'd be happy running V3.2 today on a task, you'll be happy running V4 Lite.

Strong Lite fits:

- Document classification at scale

- Automated code review comments (not full rewrites)

- Batch summarization

- Extraction pipelines (named entity, structured data pull)

- Customer support triage and draft responses

- A/B testing prompt variants across many inputs

For these tasks, the projected cost reduction from Lite is substantial and the quality delta should be minimal.
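"Within acceptable tolerance" is easier to judge with a number. For label-style tasks such as classification or triage, one simple approach is to run the same sample through both models and measure how often Lite agrees with Full. The sketch below assumes you've already collected both sets of labels; the function name, sample labels, and the 95% threshold are hypothetical choices, not a DeepSeek recommendation.

```python
def agreement_rate(full_labels: list[str], lite_labels: list[str]) -> float:
    """Fraction of items where Lite's label matches Full's (case-insensitive)."""
    if len(full_labels) != len(lite_labels):
        raise ValueError("label lists must be the same length")
    if not full_labels:
        raise ValueError("need at least one labeled item")
    matches = sum(
        a.strip().lower() == b.strip().lower()
        for a, b in zip(full_labels, lite_labels)
    )
    return matches / len(full_labels)


# Hypothetical labels from a small triage sample
full_out = ["billing", "bug", "billing", "feature", "bug"]
lite_out = ["billing", "bug", "Billing", "bug", "bug"]

rate = agreement_rate(full_out, lite_out)
print(f"agreement: {rate:.0%}")  # agreement: 80%

ACCEPTABLE = 0.95  # hypothetical tolerance; set it from your own requirements
print("Lite OK" if rate >= ACCEPTABLE else "stay on Full")  # stay on Full
```

Agreement with Full is only a proxy for quality; for free-form outputs like summaries you'd need a rubric or human review instead.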

Edge / Low-Latency Apps

A Lite variant designed to fit on a single consumer GPU (24GB VRAM) opens serious self-hosting possibilities for teams that need air-gapped environments, can't send data to external APIs, or just want inference latency measured in hundreds of milliseconds rather than seconds.

Lite use cases where latency is the deciding factor:

- Autocomplete and inline coding suggestions (IDE plugins)

- Real-time chat interfaces where users see every token stream

- Mobile or edge deployments with constrained hardware

- High-frequency agent loops where TTFT compounds across many turns

The rule of thumb: if a human would notice the delay, use Lite. If the task runs in the background, full V4's extra latency won't matter.
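Why TTFT matters so much in agent loops: sequential turns add up, and each one pays its time-to-first-token plus generation time. The sketch below is back-of-envelope arithmetic; the TTFT and tokens-per-second values are placeholders, not measurements of either model.

```python
def loop_latency_s(turns: int, ttft_s: float, output_tokens: int,
                   tokens_per_s: float) -> float:
    """Wall-clock seconds for `turns` sequential calls, each waiting for the
    first token and then streaming `output_tokens` at `tokens_per_s`."""
    per_turn = ttft_s + output_tokens / tokens_per_s
    return turns * per_turn


# Placeholder numbers for illustration only
slow = loop_latency_s(turns=20, ttft_s=1.2, output_tokens=200, tokens_per_s=40)
fast = loop_latency_s(turns=20, ttft_s=0.4, output_tokens=200, tokens_per_s=80)
print(f"{slow:.0f}s vs {fast:.0f}s")  # 124s vs 58s
```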

How to Switch in API

DeepSeek follows the pattern of exposing variants through distinct model strings. Based on V3.x convention, expect:

```python
import os

from openai import OpenAI

client = OpenAI(
    api_key=os.environ["DEEPSEEK_API_KEY"],  # assumed env var name; or paste your key
    base_url="https://api.deepseek.com",
)

# Full V4 (expected model string)
response_full = client.chat.completions.create(
    model="deepseek-chat",  # deepseek-chat maps to the current flagship
    messages=[{"role": "user", "content": "Your prompt"}],
)

# V4 Lite (expected model string, confirm at launch)
response_lite = client.chat.completions.create(
    model="deepseek-chat-lite",  # unconfirmed; this call fails until the string exists
    messages=[{"role": "user", "content": "Your prompt"}],
)
```

For a routing wrapper that lets you switch based on task type:

```python
from typing import Optional

FULL_MODEL = "deepseek-chat"        # live today (V3.2)
LITE_MODEL = "deepseek-chat-lite"   # unconfirmed placeholder, watch official docs

LITE_TASKS = {"classify", "summarize", "extract", "triage", "draft"}
FULL_TASKS = {"refactor", "reason", "debug_complex", "architect"}


def get_model_for_task(task_type: str, token_budget: Optional[float] = None) -> str:
    """
    Route to Lite or Full based on task requirements.

    token_budget: max acceptable cost in USD per 1M output tokens
    """
    if task_type in LITE_TASKS:
        return LITE_MODEL
    if task_type in FULL_TASKS:
        return FULL_MODEL

    # Cost-based fallback for unknown task types
    # ("is not None" so that a budget of 0.0 still counts)
    if token_budget is not None and token_budget < 0.30:
        return LITE_MODEL

    return FULL_MODEL  # default to full


# Usage (reuses the `client` created above)
model = get_model_for_task("summarize")
response = client.chat.completions.create(
    model=model,
    messages=[{"role": "user", "content": "Summarize this document..."}],
)
```

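Build this abstraction now. Full-model tasks already route to V3.2 today; until DeepSeek confirms a Lite identifier, point the Lite branch at "deepseek-chat" as well, since the placeholder string will likely be rejected. Once V4 Lite ships, swap in the real model string. Monitor the DeepSeek API changelog for the exact model identifiers.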
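One low-risk way to handle the placeholder is to check the preferred model against the IDs the API reports and fall back to the current flagship otherwise. A minimal sketch: resolve_model is a hypothetical helper, and it assumes DeepSeek's OpenAI-compatible models endpoint (client.models.list() in the OpenAI SDK) lists every usable model string.

```python
def resolve_model(preferred: str, fallback: str, available: set[str]) -> str:
    """Return `preferred` if the API lists it, otherwise `fallback`."""
    return preferred if preferred in available else fallback


# With a live client (not run here):
#   available = {m.id for m in client.models.list()}
available = {"deepseek-chat", "deepseek-reasoner"}  # example snapshot
print(resolve_model("deepseek-chat-lite", "deepseek-chat", available))
# deepseek-chat
```

Cache the model list at startup rather than fetching it per request.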

If you want to estimate whether Lite actually fits your workload before committing — track your current token volumes, latency requirements, and task types in one place, then run the cost comparison against your real usage. Macaron lets you save your analysis templates and token tracking setups so the next model evaluation takes minutes, not a full afternoon. Try it free at macaron.im.

FAQ

Q: Is V4 Lite officially confirmed?

Not by DeepSeek directly. The "V4 Lite" test version, known as "sealion-lite," is currently in testing with a 1M-token context window and native multimodal architecture. Official confirmation and model strings are expected at or after full V4 launch.

Q: Does V4 Lite support tool calling?

Expected yes, based on DeepSeek's pattern: deepseek-chat has supported tool calling throughout the V3.x generation, though the research-oriented V3.2-Speciale build shipped without it. Until official docs confirm, treat Lite support as likely but unverified.
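For reference, here's a sketch of the OpenAI-compatible tool-calling request shape that the current deepseek-chat accepts, assembled as plain keyword arguments so the same request can be pointed at a Lite model string once one is confirmed. The get_weather tool and the build_tool_request helper are hypothetical, and V4 Lite tool support remains unverified.

```python
def build_tool_request(model: str, prompt: str) -> dict:
    """Keyword arguments for client.chat.completions.create(**kwargs)."""
    weather_tool = {
        "type": "function",
        "function": {
            "name": "get_weather",  # hypothetical tool
            "description": "Get the current weather for a city.",
            "parameters": {
                "type": "object",
                "properties": {
                    "city": {"type": "string", "description": "City name"},
                },
                "required": ["city"],
            },
        },
    }
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "tools": [weather_tool],
    }


kwargs = build_tool_request("deepseek-chat", "What's the weather in Hangzhou?")
# With a live client:
#   response = client.chat.completions.create(**kwargs)
#   calls = response.choices[0].message.tool_calls  # None if no tool was called
print(kwargs["tools"][0]["function"]["name"])  # get_weather
```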

Q: Can V4 Lite actually run on a single RTX 4090?

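Analysts expect a variant designed to fit on a single consumer GPU with 24GB VRAM, but that is unlikely to be the ~200B model itself. In an MoE model only ~20–25B parameters are active per token, yet all expert weights still have to sit in memory (or be offloaded): at 4-bit quantization, ~200B parameters need roughly 100 GB for weights alone, far beyond a 4090's 24GB. A single-card fit points to a much smaller variant, such as the rumored Coder-33B (about 16.5 GB of weights at 4-bit), or to offloading experts to CPU RAM at a significant speed cost. Self-hosting feasibility depends on which variants and quantization options are actually released.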
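The weight-memory arithmetic is easy to check yourself. A minimal sketch that counts weights only (KV cache, activations, and runtime overhead come on top, so treat results as lower bounds); the parameter counts are the unconfirmed estimates discussed here.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes).
    Every parameter is stored, including MoE experts inactive for a given token."""
    bytes_total = params_billion * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


GPU_VRAM_GB = 24  # e.g. RTX 4090

for label, params_b in [("V4 Lite (~200B total)", 200), ("Coder-33B (rumored)", 33)]:
    need = weight_memory_gb(params_b, bits_per_weight=4)
    # Weights only: a "fits" verdict still needs headroom for KV cache
    verdict = "fits" if need < GPU_VRAM_GB else "does not fit"
    print(f"{label}: ~{need:.1f} GB at 4-bit -> {verdict} in {GPU_VRAM_GB} GB")
# V4 Lite (~200B total): ~100.0 GB at 4-bit -> does not fit in 24 GB
# Coder-33B (rumored): ~16.5 GB at 4-bit -> fits in 24 GB
```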

Q: When will V4 Lite be available via the official API?

As of this writing, DeepSeek was reported to be planning the V4 release for the week of March 2, 2026. Lite variants in DeepSeek's history have followed the flagship by days to weeks, not months. Watch the official API docs and changelog for the model string.

Q: Should I wait for V4 Lite before building on V3.2?

No. Build a router/gateway abstraction now so adopting V4 Lite later is a low-risk switch. The routing wrapper above takes about an hour to implement and lets you swap model strings without touching application logic. That's the right posture regardless of release timeline.

Q: What's the actual quality gap between Lite and Full for coding?

Leaked demonstrations show V4 Lite producing more optimized code than V3.2, Claude Opus 4.6, and Gemini 3.1 on SVG generation tasks. For standard coding tasks — code completion, function generation, refactoring with clear instructions — Lite is expected to match or beat V3.2. The gap opens on complex multi-file reasoning and long-chain agentic tasks where the full V4's mHC architecture has a structural advantage.
